Slurm Cluster Creation

Overview

Slurm (Simple Linux Utility for Resource Management) is a workload manager used for high-performance computing (HPC), job scheduling, and batch processing on allocated compute resources.

In Bridge, you can create and manage Slurm clusters, submit and monitor jobs from the UI, and view cluster resources and the Slurm dashboard.

This guide covers:

- Creating a Slurm cluster (name, version, worker and master node selection)

- Monitoring cluster creation and viewing master/worker details

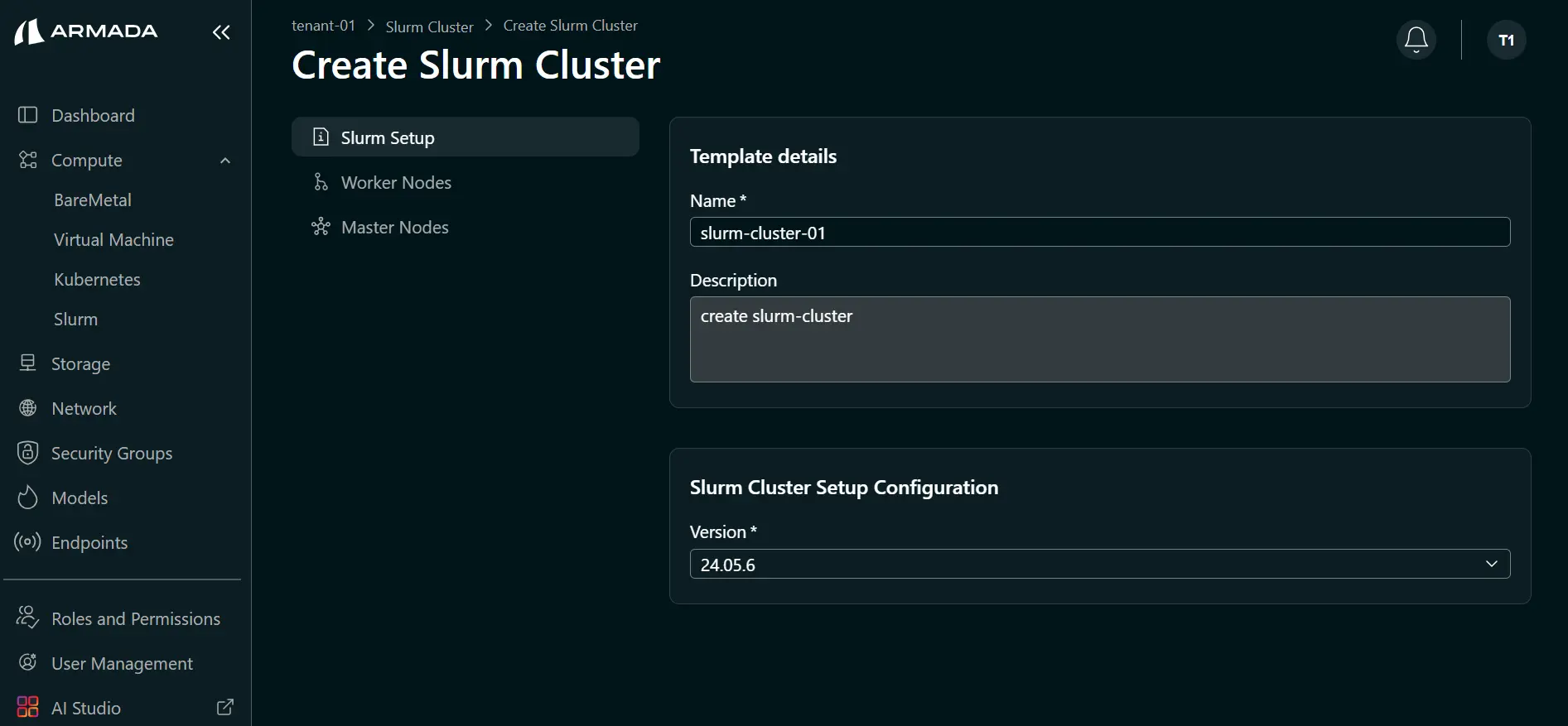

Bridge creates Slurm clusters using Slurm version 24.05.6. You select this version when you configure the cluster (see Step 2: Configure Cluster).

Prerequisites

- Tenant Admin access — Log in as a Tenant Admin to create and manage Slurm clusters.

- Bare Metal or Virtual Machine resources allocated to your tenant

- Minimum two nodes (Bare Metal or VM) available — one for the master node and at least one for worker nodes

Create Slurm Cluster

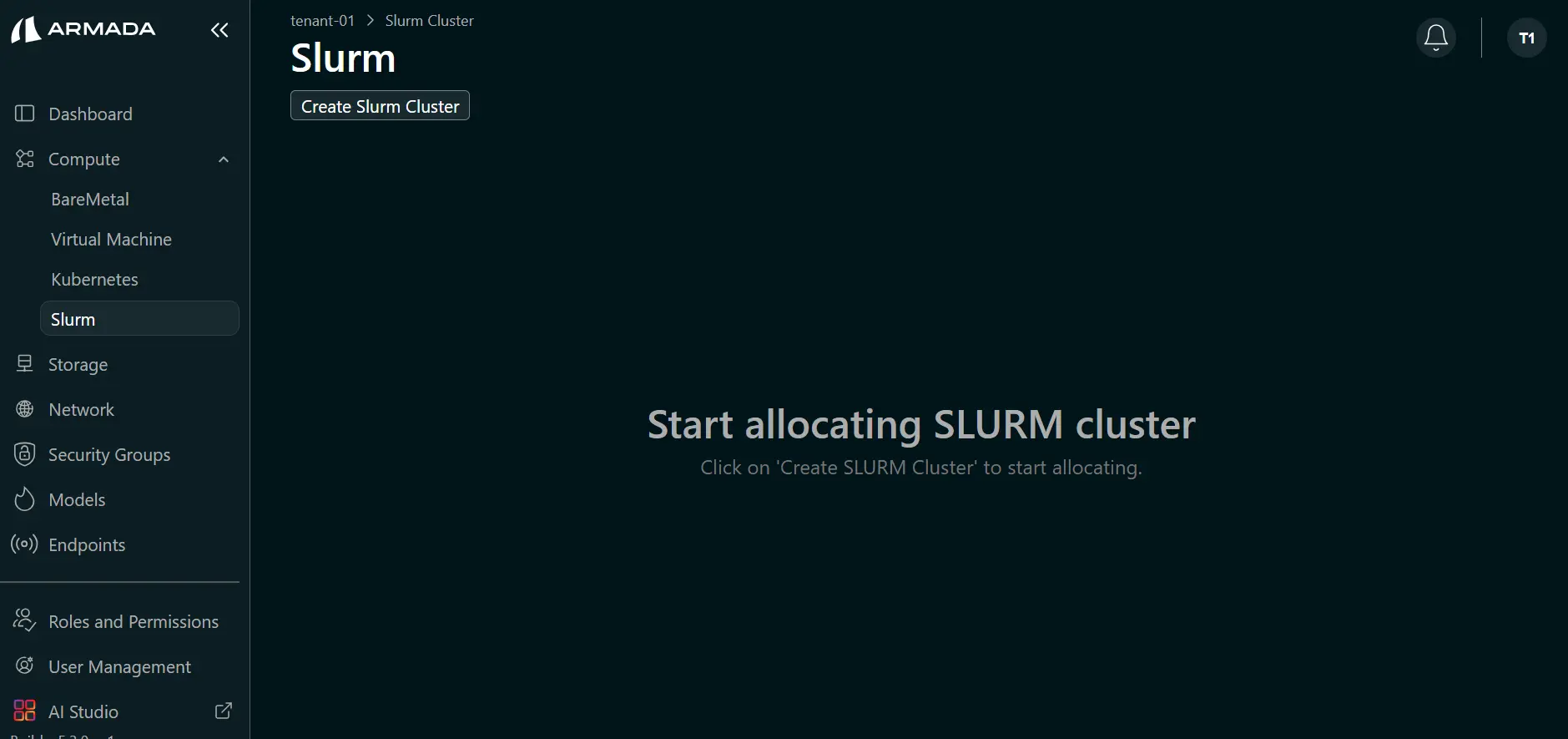

Step 1: Navigate to Slurm

- In the left sidebar, open Compute → Slurm.

- Click Create Slurm Cluster.

Step 2: Configure Cluster

- Enter the Slurm cluster name (e.g., slurm-cluster-01) and Description (e.g., Creating Slurm cluster).

- Select the Version (e.g., 24.05.6) from the dropdown.

- Click Next.

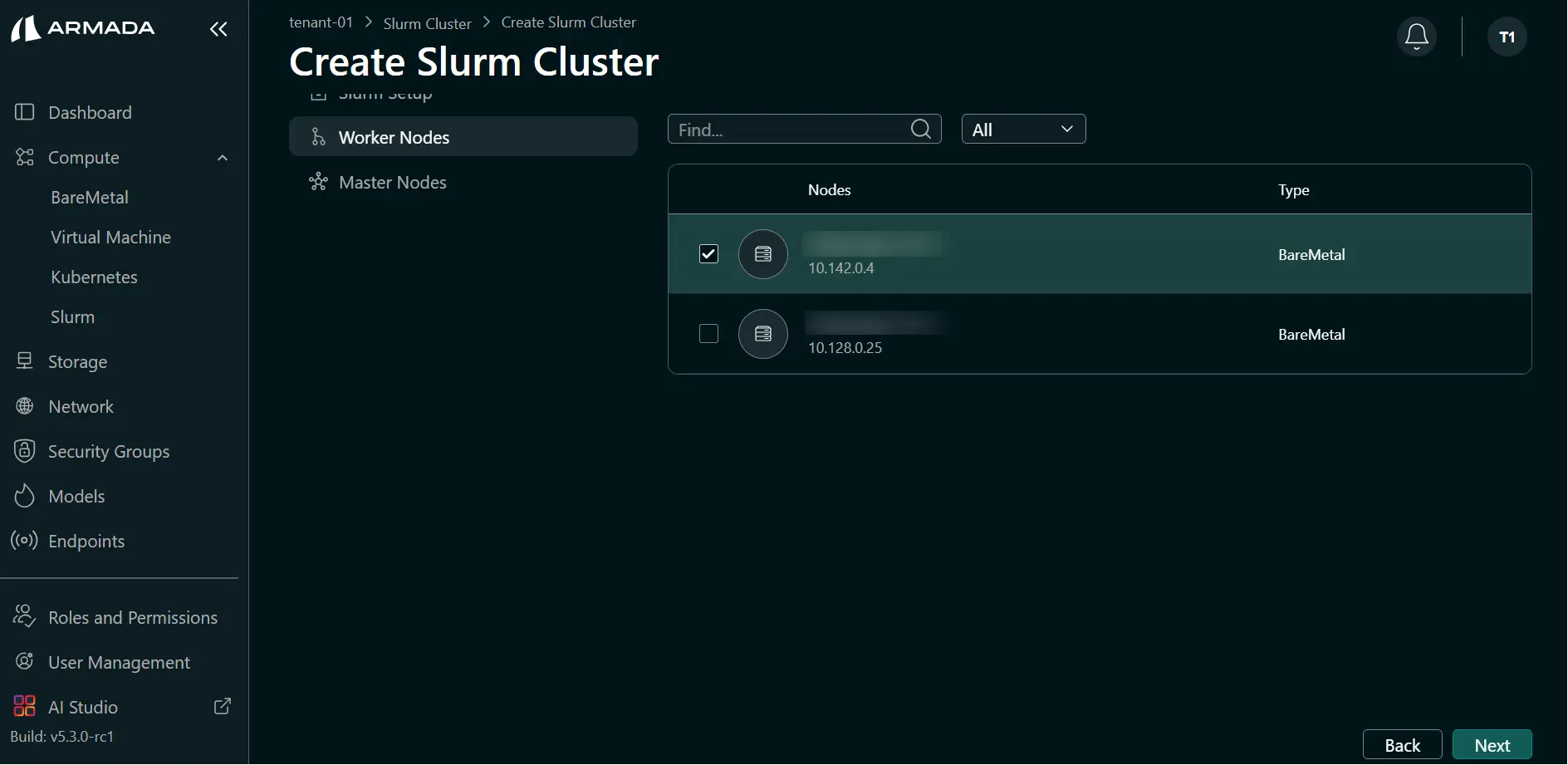

Step 3: Worker Node Selection

- Select one or more worker nodes.

- Click Next.

At least one worker node is required to create a Slurm cluster. Worker nodes can be Bare Metal nodes or Virtual Machines.

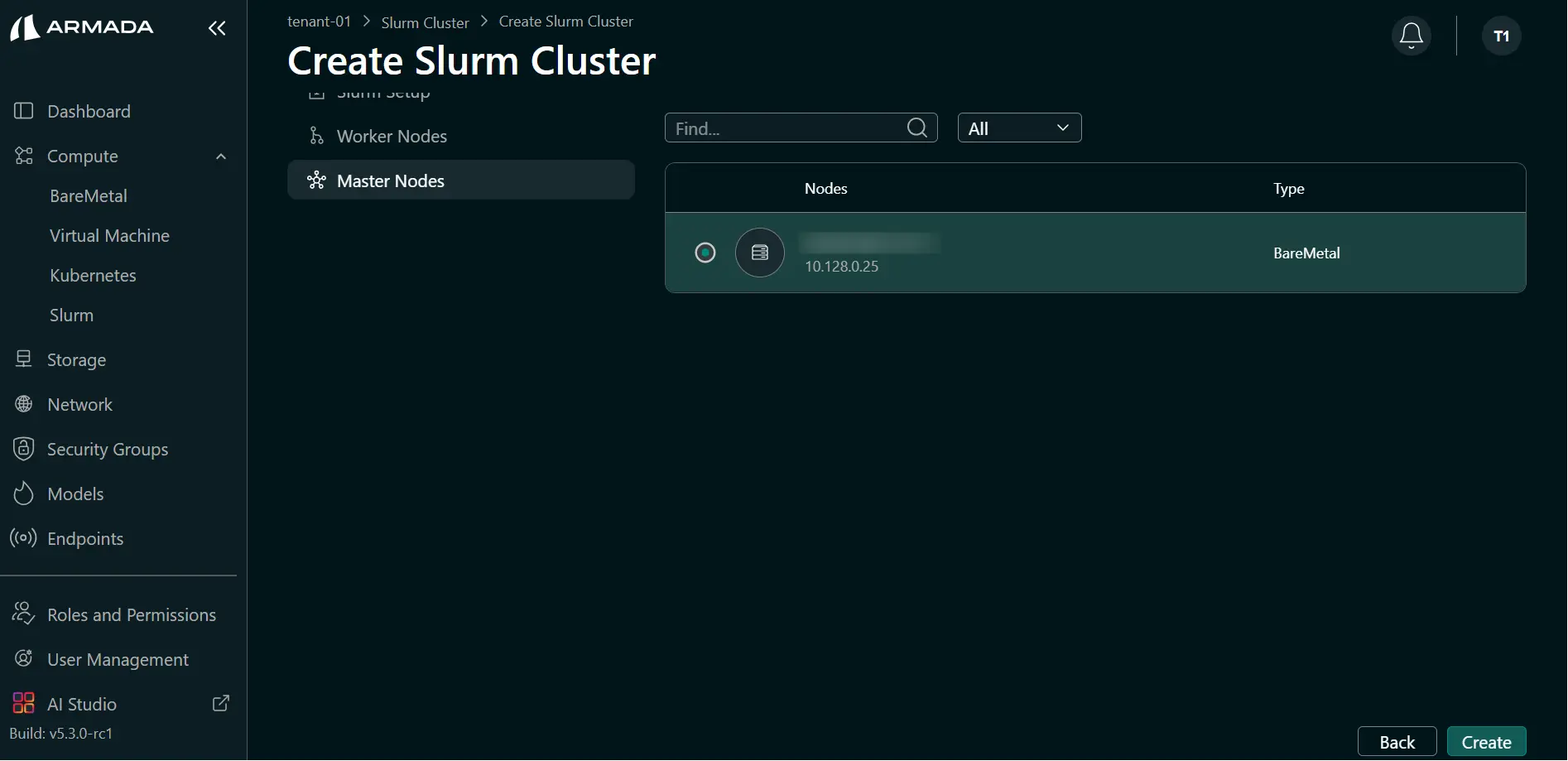

Step 4: Master Node Selection

- Select the master node.

- Click Create to start Slurm cluster creation.

Only one master node is required. It can be a Bare Metal node or a Virtual Machine.

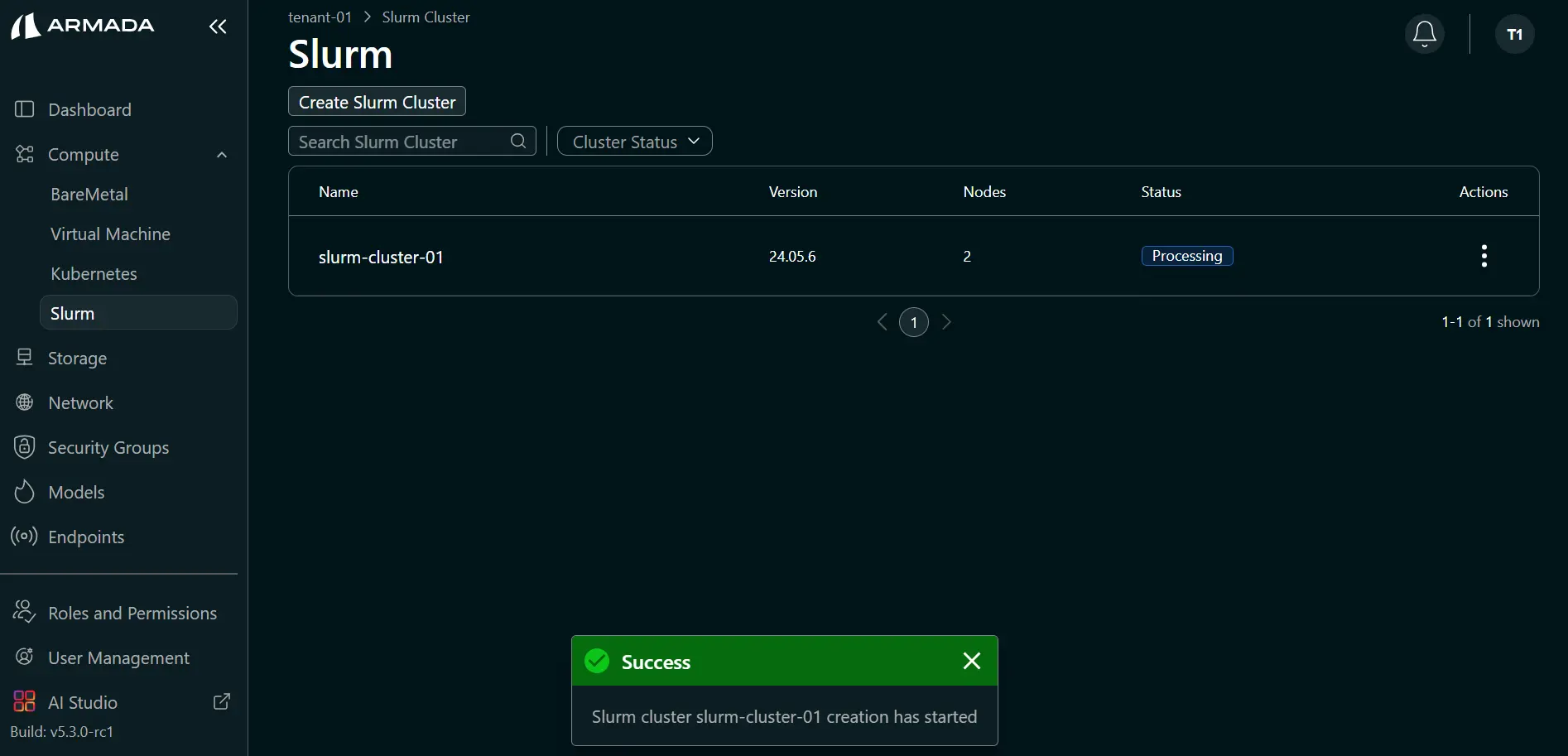

Step 5: Monitor Cluster Creation

- After creation starts, the cluster state shows Processing.

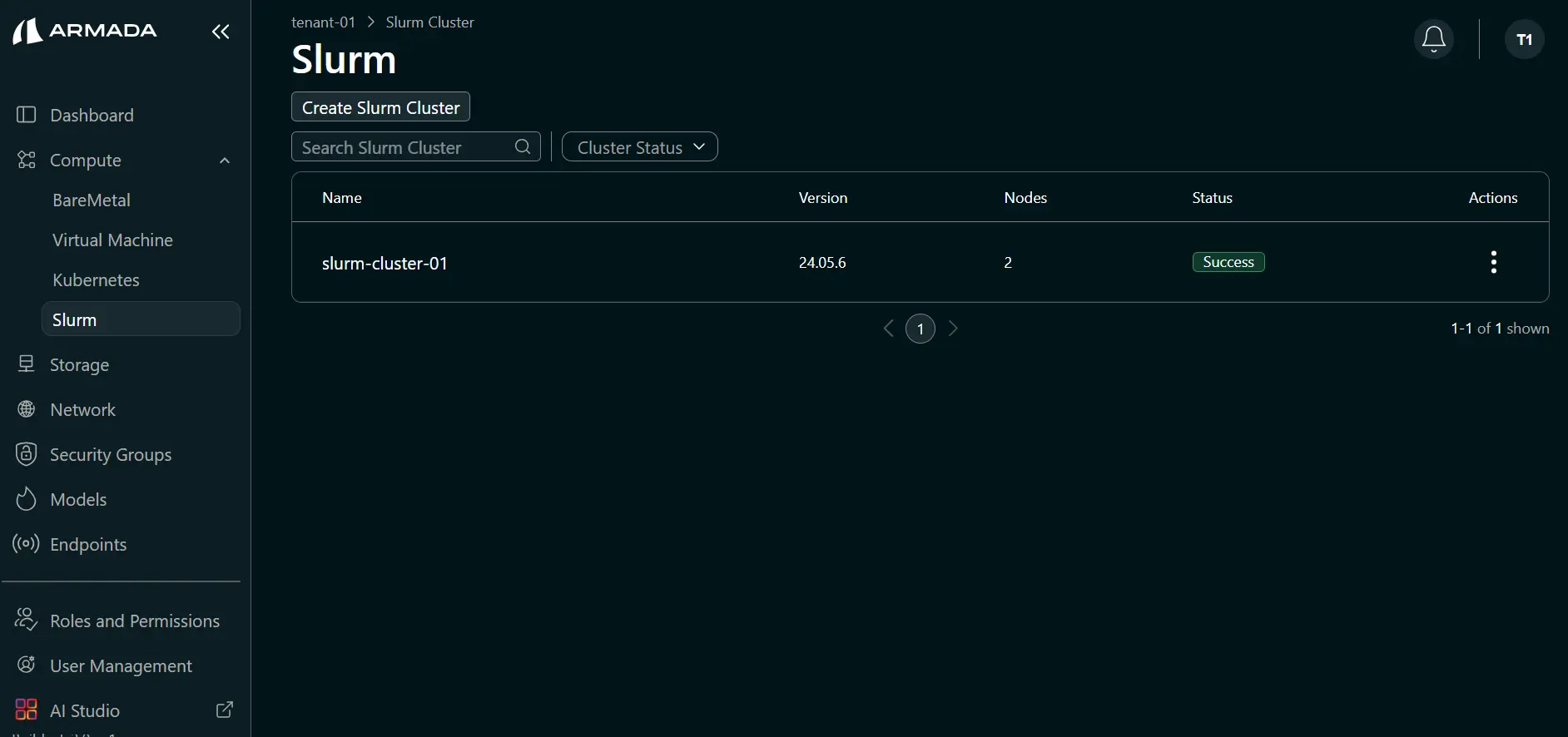

- When creation completes successfully, the cluster state shows Success.

Cluster creation typically takes around an hour, depending on the compute nodes or virtual machines used.

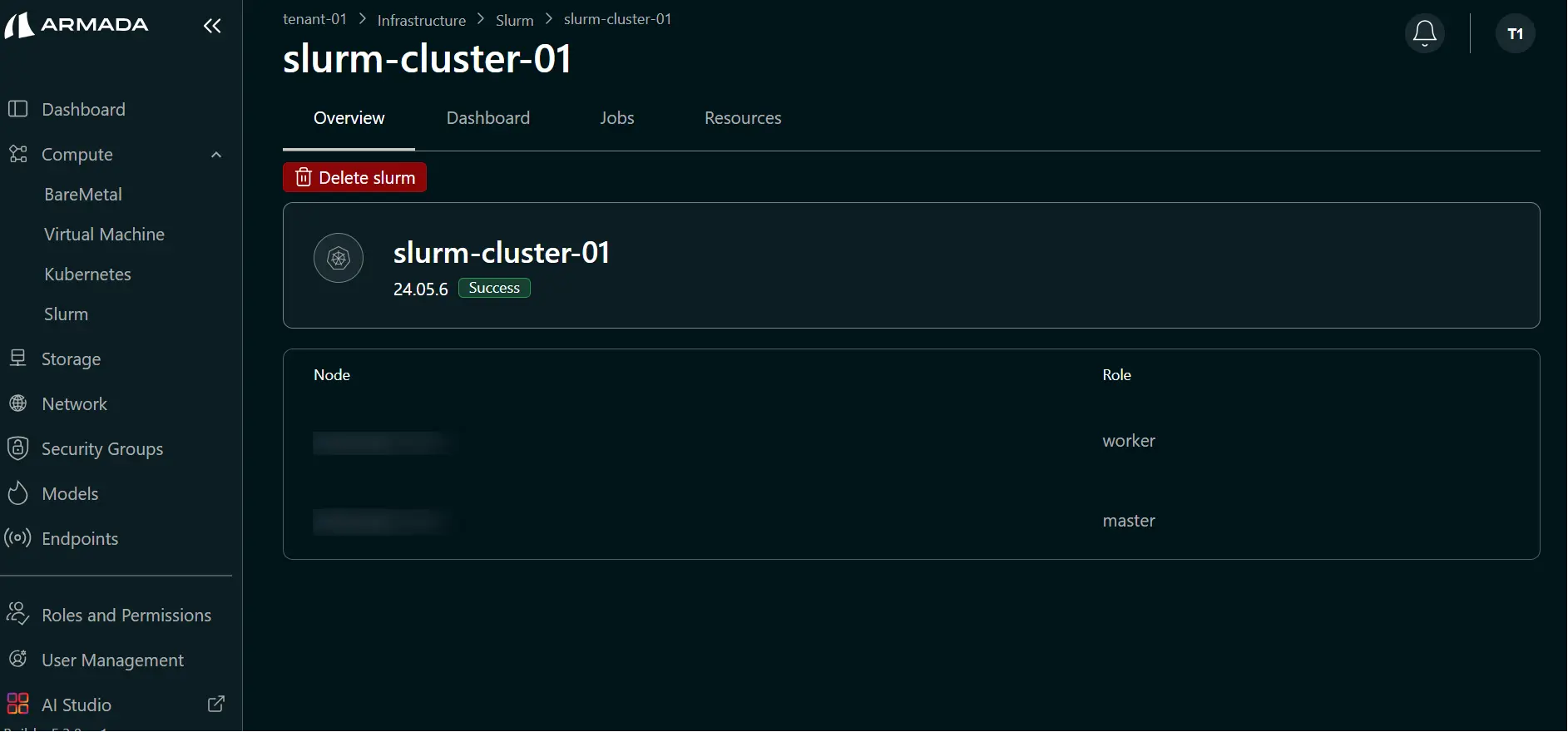
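If you prefer to script the wait rather than watch the UI, the loop below is a minimal sketch of polling until the cluster leaves the Processing state. This guide does not describe a Bridge API, so `fetch_state` is a hypothetical callable that you would supply yourself. Only the state names Processing and Success come from this guide; the two-hour timeout is an assumption based on the typical one-hour creation time.

```python
import time
from typing import Callable


def wait_for_cluster(
    fetch_state: Callable[[], str],
    poll_seconds: float = 60.0,
    timeout_seconds: float = 2 * 60 * 60,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Poll until the cluster state is no longer "Processing".

    fetch_state is a hypothetical, caller-supplied function that returns
    the current cluster state as shown in the UI. It is not a Bridge API.
    """
    waited = 0.0
    while True:
        state = fetch_state()
        if state != "Processing":
            # "Success" or any other terminal state ends the wait.
            return state
        if waited >= timeout_seconds:
            raise TimeoutError(
                f"cluster still Processing after {waited:.0f} seconds"
            )
        sleep(poll_seconds)
        waited += poll_seconds
```

The `sleep` parameter exists only so the loop can be exercised without real delays; in normal use the default `time.sleep` applies.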

Step 6: View Master and Worker Details

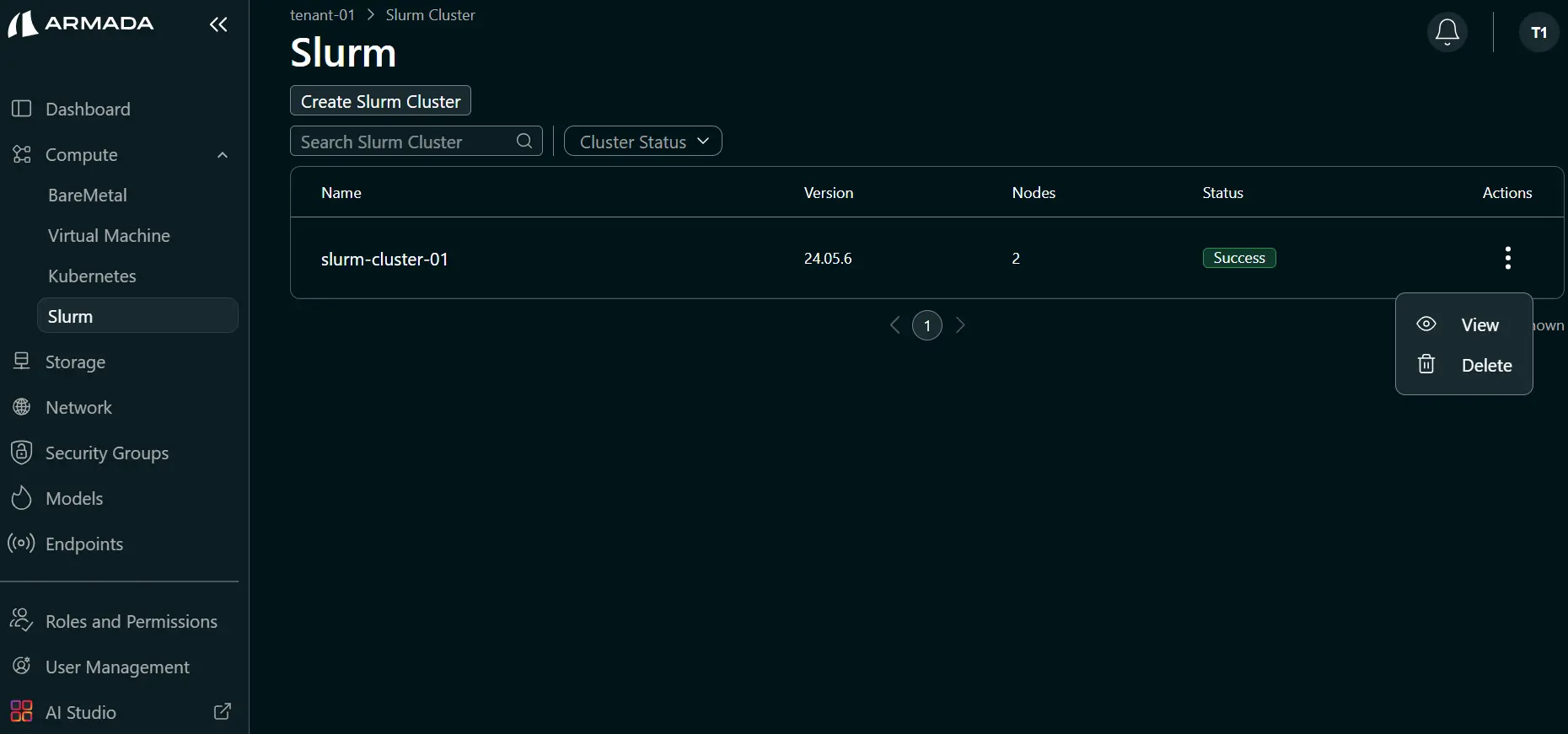

- Click the ellipsis (three-dot) menu for the Slurm cluster.

- Click View (eye icon) to see the Slurm master and worker node information.
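Beyond the UI view, you may want to confirm node health from Slurm itself. The sketch below assumes you have shell access to the master node (not covered in this guide) and that the standard `sinfo` command is on the path. It runs `sinfo -h -N -o "%N %T"`, which prints one node name and state per line, and flags any node whose state is not in a small "healthy" set. That set is an assumption of this sketch, not something Bridge defines.

```python
import subprocess
from typing import Dict, List

# Assumption: these sinfo states are treated as healthy in this sketch.
HEALTHY_STATES = {"idle", "mixed", "allocated", "completing"}


def query_sinfo() -> str:
    """Run sinfo in node-oriented mode with no header; return raw output."""
    result = subprocess.run(
        ["sinfo", "-h", "-N", "-o", "%N %T"],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout


def parse_sinfo(output: str) -> Dict[str, str]:
    """Map node name to state, skipping lines that are not 'name state'."""
    nodes: Dict[str, str] = {}
    for line in output.splitlines():
        parts = line.split()
        if len(parts) == 2:
            name, state = parts
            nodes[name] = state
    return nodes


def unhealthy_nodes(nodes: Dict[str, str]) -> List[str]:
    """Return sorted names of nodes whose state is not in HEALTHY_STATES.

    States with a suffix such as "idle*" (node not responding) do not
    match exactly and are therefore reported as unhealthy.
    """
    return sorted(n for n, s in nodes.items() if s not in HEALTHY_STATES)
```

On the master node, `unhealthy_nodes(parse_sinfo(query_sinfo()))` returning an empty list would suggest that all workers have registered and are responding.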

Common Use Cases

- Batch processing — Run batch jobs across multiple nodes

- HPC workloads — Execute high-performance computing tasks

- Parallel computing — Distributed computing tasks

- Machine learning training — GPU-accelerated ML jobs
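To make these use cases concrete, the helper below is a minimal sketch that renders a Slurm batch script for a multi-node job. It uses standard `sbatch` directives (`--job-name`, `--nodes`, `--ntasks-per-node`, `--time`, `--gres`). The function name, its parameters, and the example values are illustrative only, and the GPU line assumes that GPU generic resources are configured on the cluster, which this guide does not confirm.

```python
def render_batch_script(
    job_name: str,
    nodes: int,
    tasks_per_node: int,
    time_limit: str,
    command: str,
    gpus_per_node: int = 0,
) -> str:
    """Return the text of a batch script suitable for `sbatch`.

    time_limit uses Slurm's HH:MM:SS form, for example "01:00:00".
    """
    lines = [
        "#!/bin/bash",
        f"#SBATCH --job-name={job_name}",
        f"#SBATCH --nodes={nodes}",
        f"#SBATCH --ntasks-per-node={tasks_per_node}",
        f"#SBATCH --time={time_limit}",
    ]
    if gpus_per_node > 0:
        # Assumption: the cluster exposes GPUs as a "gpu" generic resource.
        lines.append(f"#SBATCH --gres=gpu:{gpus_per_node}")
    # srun launches the command once per allocated task.
    lines += ["", f"srun {command}"]
    return "\n".join(lines) + "\n"
```

For example, `render_batch_script("train", 2, 4, "01:00:00", "python train.py", gpus_per_node=1)` produces a script requesting two nodes with one GPU each; saving it to a file and running `sbatch` on that file would queue the job.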