Create and Access Workspace

JupyterHub in Bridge provides interactive notebook environments where tenant users can write code, analyze data, and develop machine learning models with access to GPU, CPU, or MIG (Multi-Instance GPU) resources.

Each server runs in an isolated environment. You can create multiple servers with different profiles depending on your workload.

Prerequisites

- A JupyterHub cluster exists — created by the Tenant Admin using the JupyterHub with KAI Scheduler cluster template

- You have a tenant user account — created by a Tenant Admin

- MIG profile configured (optional) — required only for the Environment with MIG GPU access profile

Step 1: Open AI Studio

-

Log in to Bridge as a tenant user.

-

In the left sidebar, click AI Studio. A new tab opens.

-

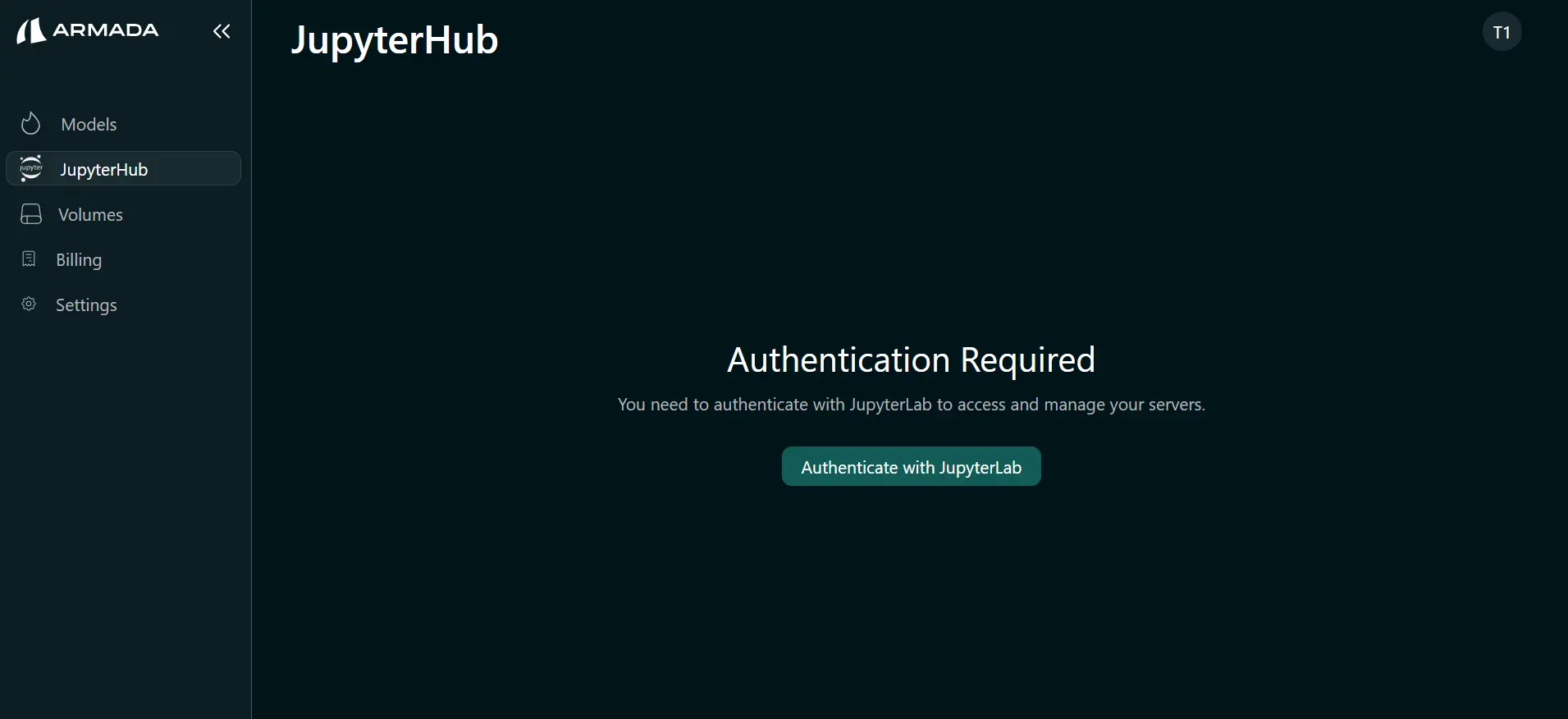

In the AI Studio sidebar, click JupyterHub. The JupyterHub page opens showing Authentication Required.

Step 2: Authenticate with JupyterLab

Authentication is required once per tenant user. After authenticating, you can create and manage servers without repeating these steps.

-

Click Authenticate with JupyterLab.

-

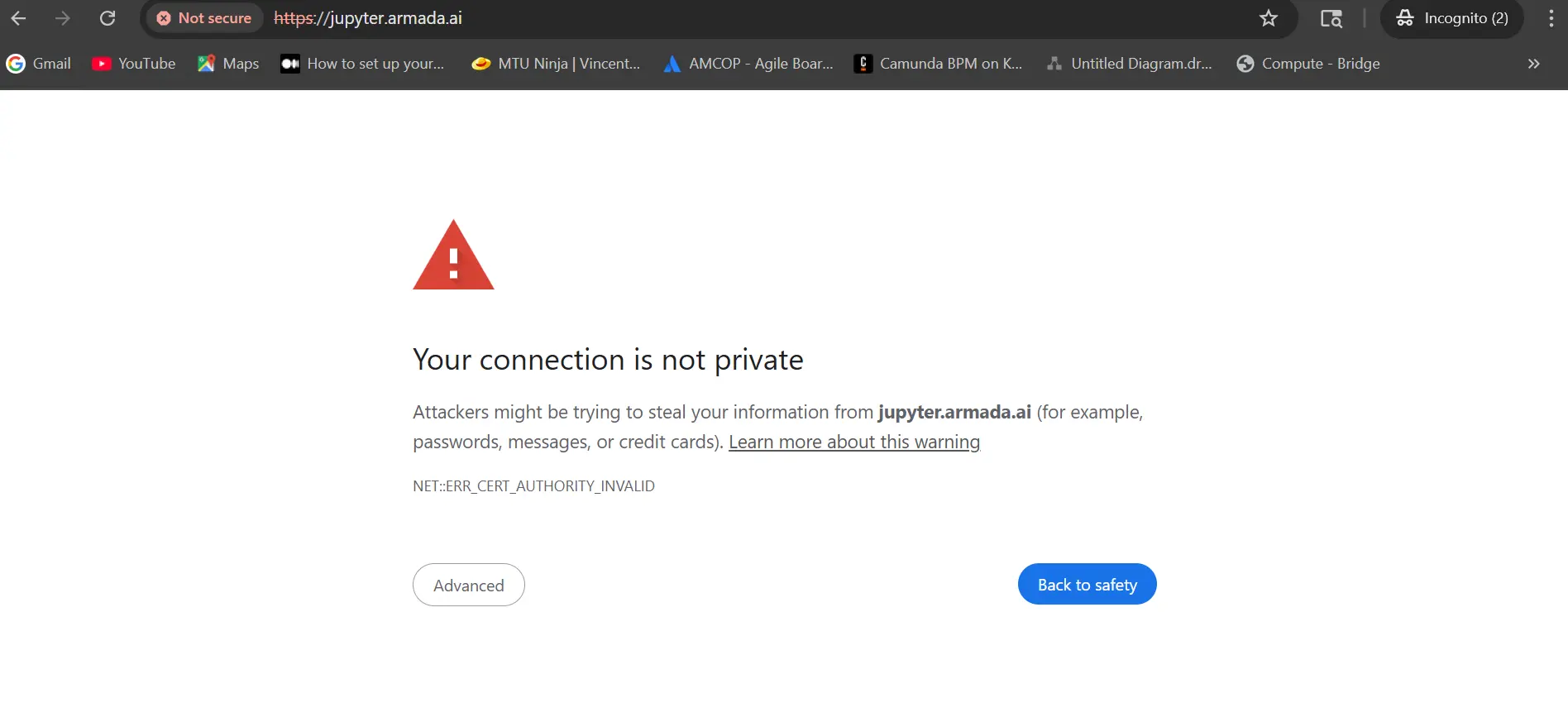

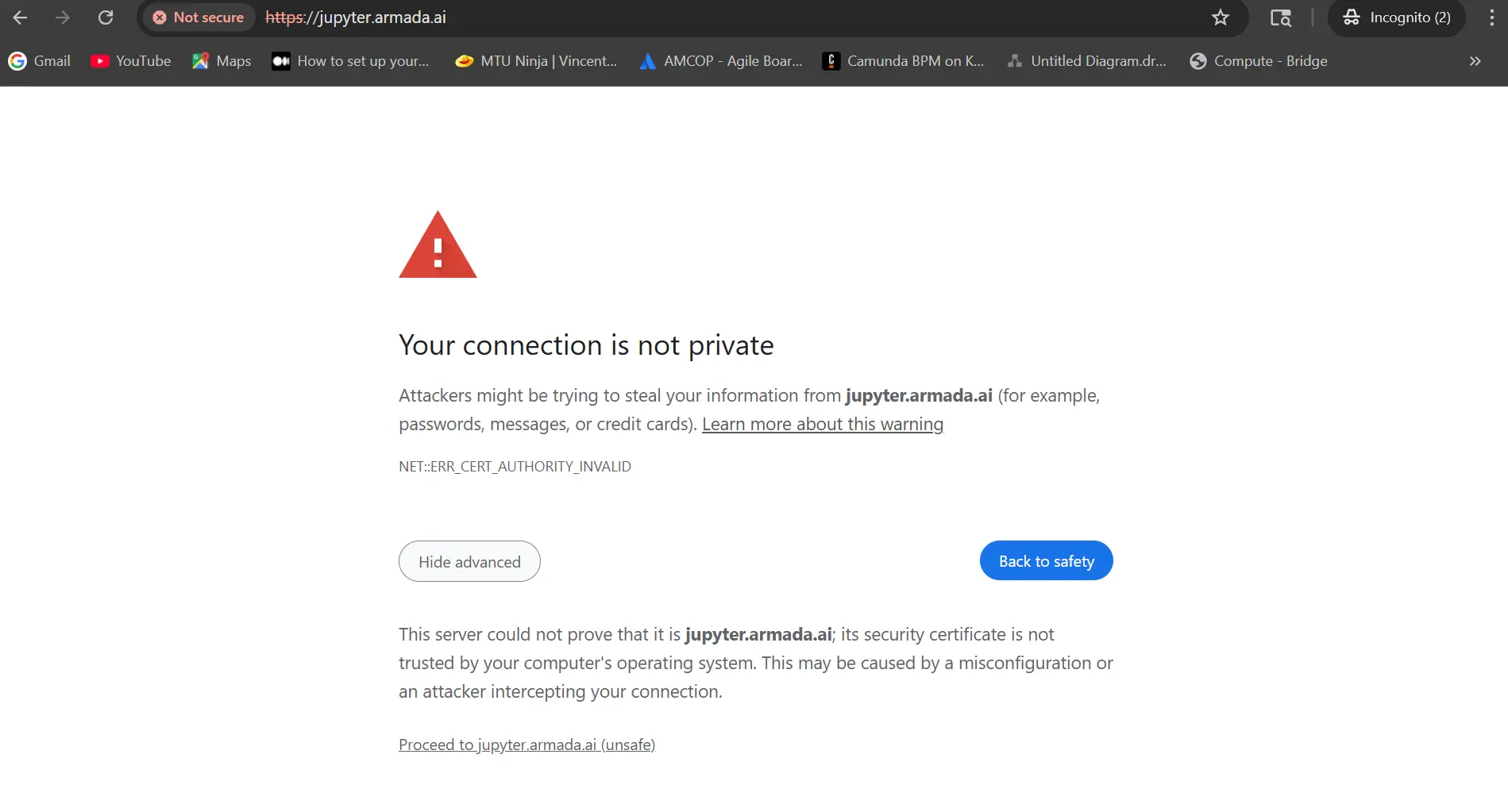

The browser opens a security warning (when the JupyterHub endpoint uses a self-signed or untrusted certificate). Click Advanced.

-

Click Proceed to jupyter.armada.ai (unsafe) (or the equivalent for your browser).

-

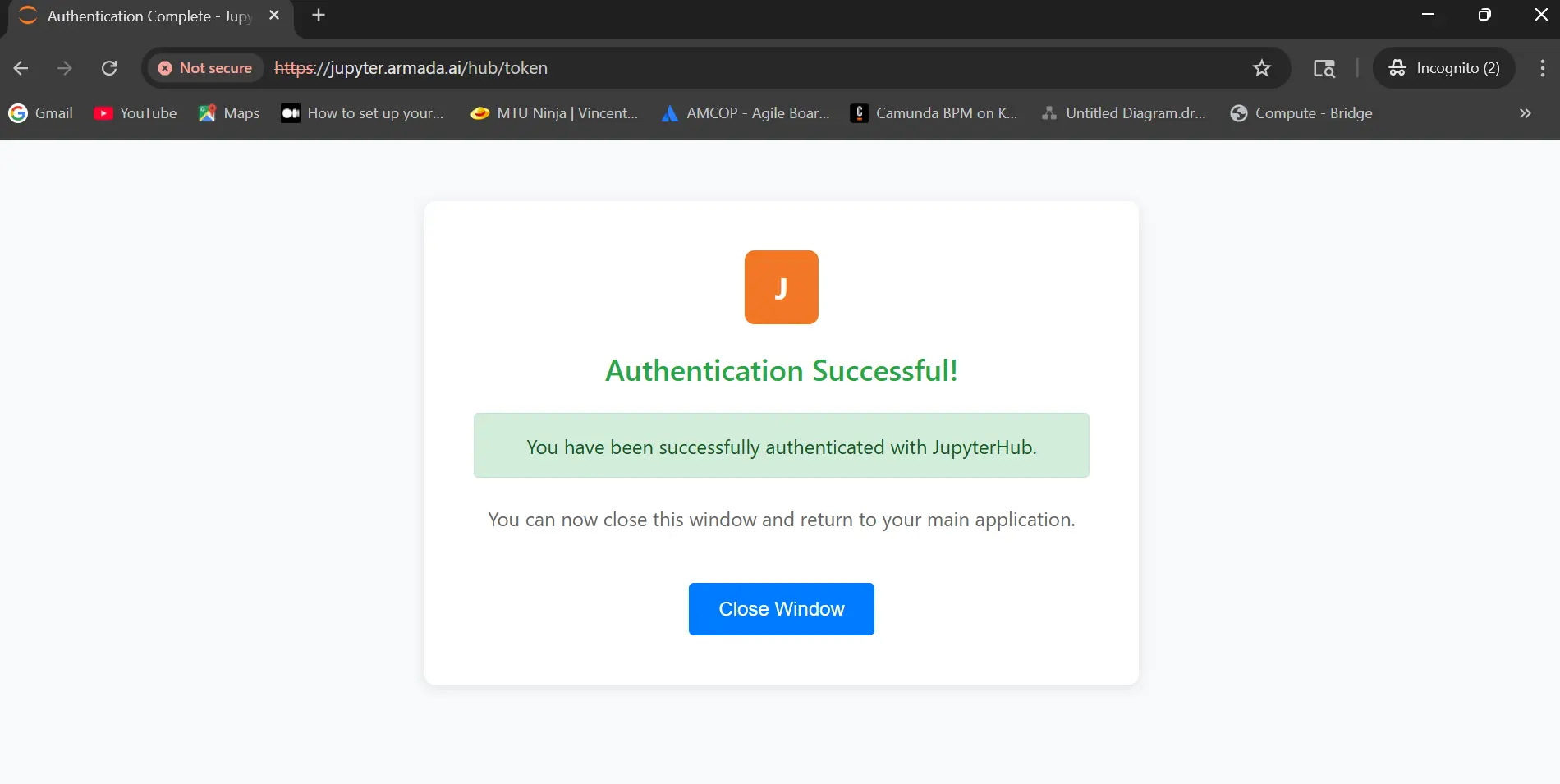

The page shows Authentication Successful. Click Close Window.

-

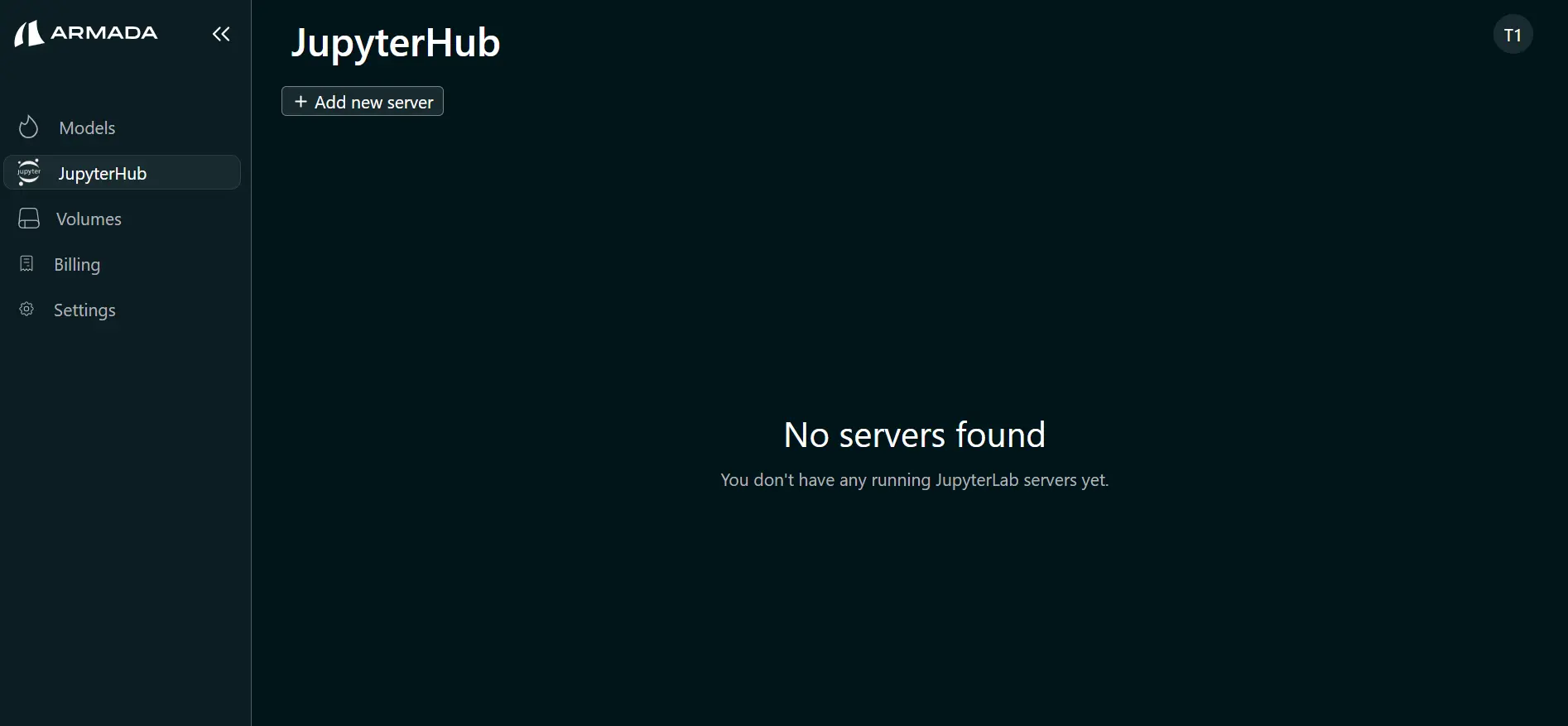

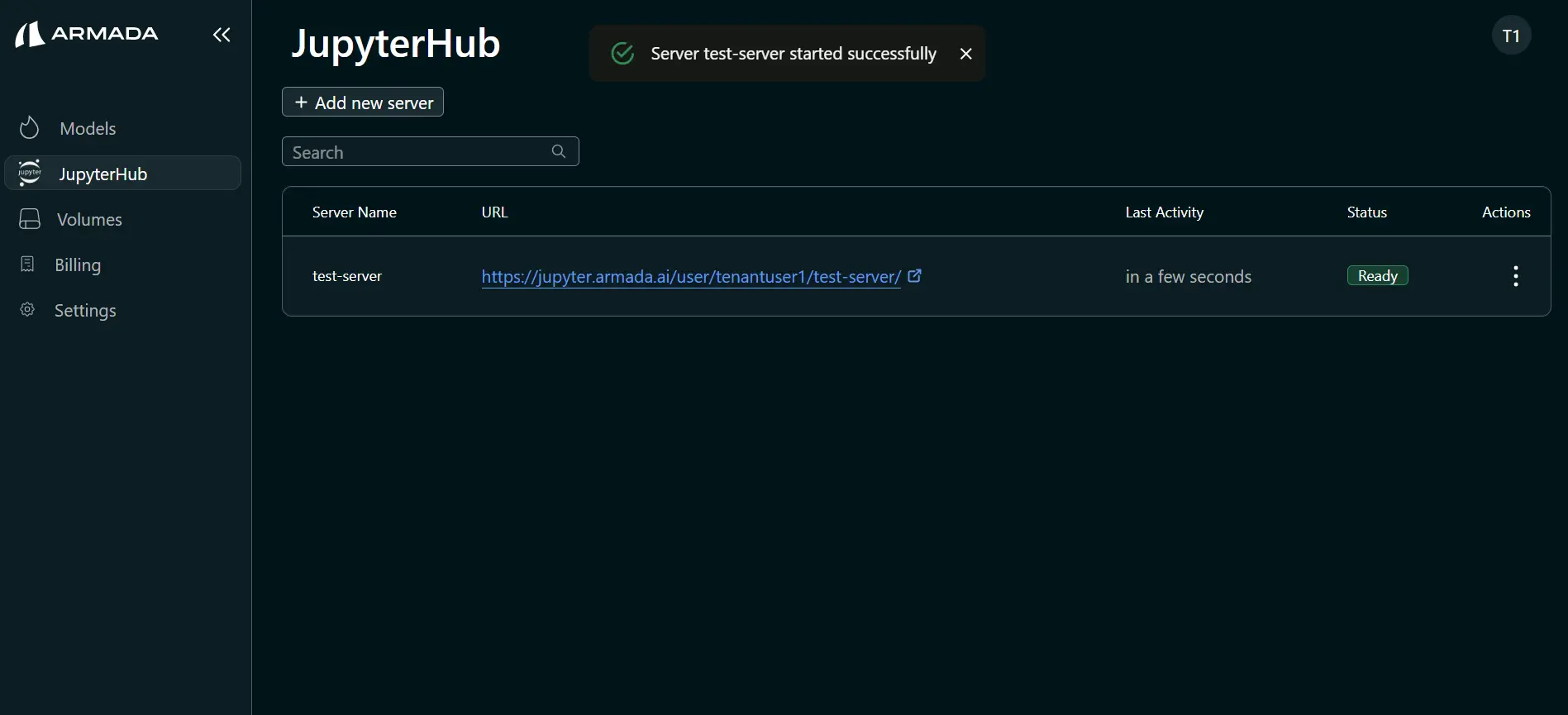

Return to the JupyterHub tab. You should now see the Add new server option.

Step 3: Create a Workspace

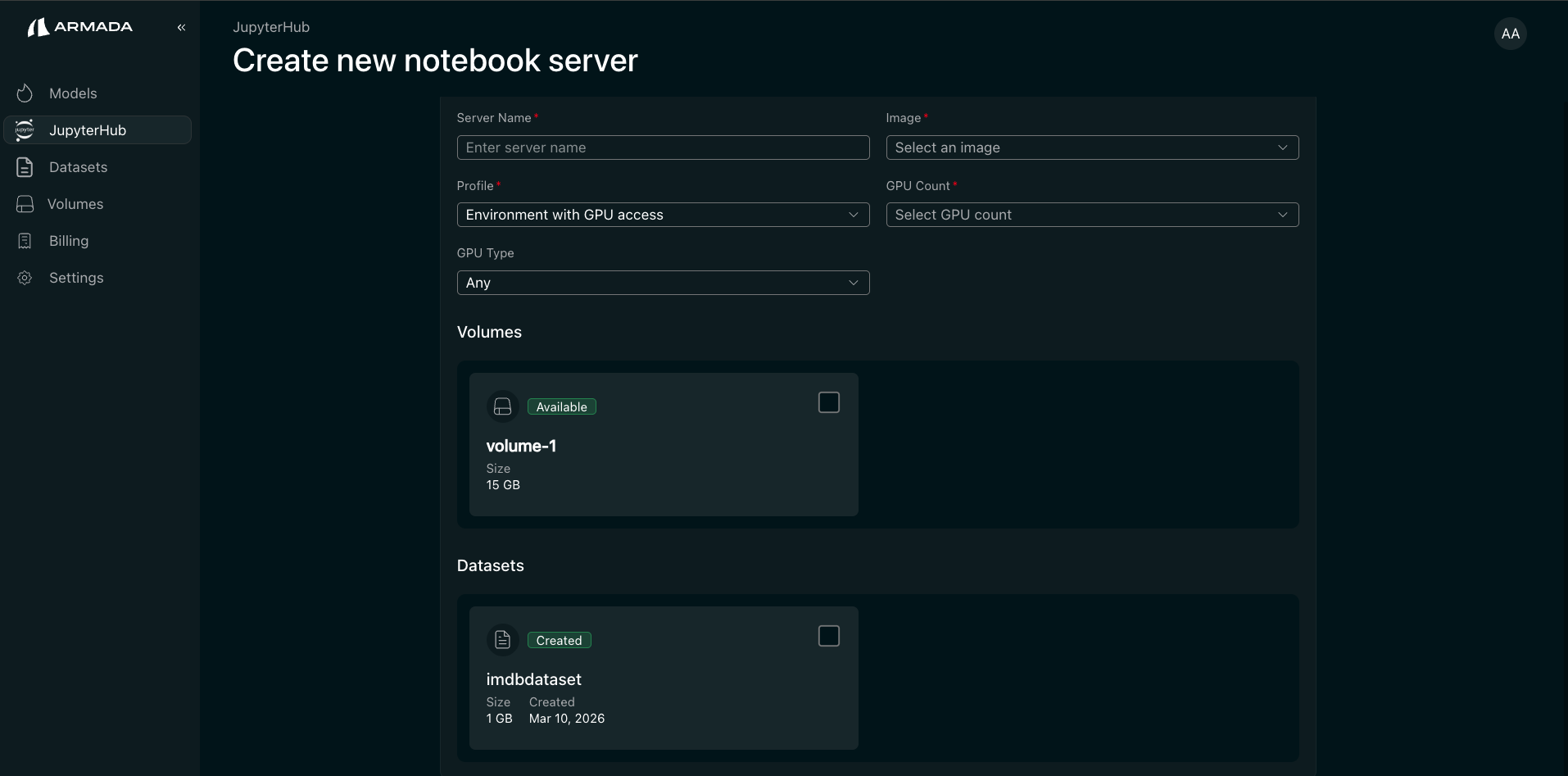

- Click + Add new server.

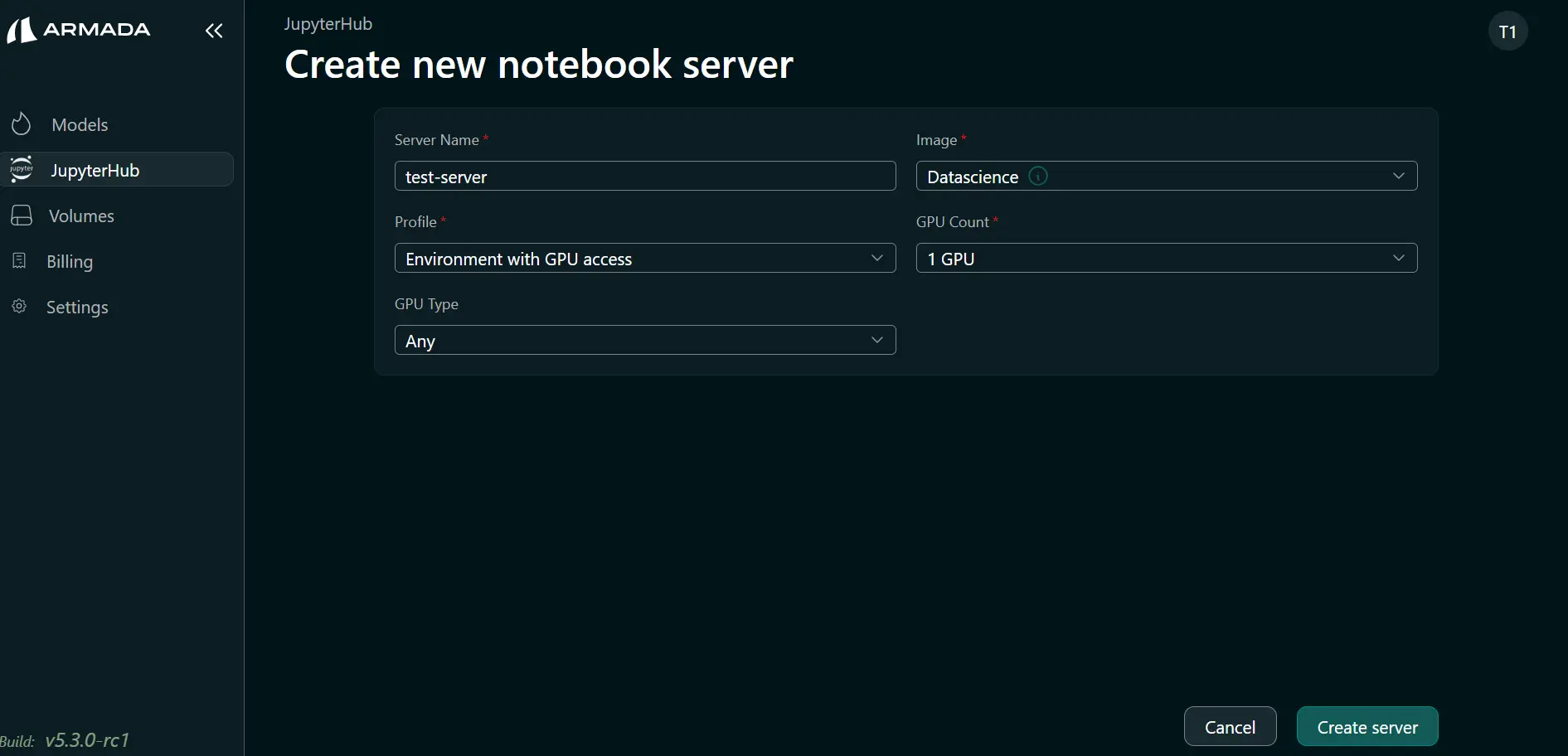

- Enter a Server name and select an Image from the dropdown.

- Choose a Profile that matches your workload:

| Profile | Resources | Use case |
|---|---|---|
| Environment with GPU access | Full GPU | ML training, CUDA, deep learning |
| Environment with CPU | CPU only | Data analysis, scripting, light computation |
| Environment with MIG GPU access | GPU partition | Workloads needing a fraction of a GPU |

- If you selected the Environment with GPU access profile, select the GPU count you need.

- If you selected the Environment with MIG GPU access profile, select the MIG Profile you need.

- Click Create Server.

- Wait until the server status is Ready.

Datasets and volumes with JupyterHub

If the Admin has imported the NFS server, you can create volumes and datasets in AI Studio and attach them when creating a JupyterHub server. The server is then created with access to the chosen datasets and volumes, so you can train and run inference on your model and data. Volume data is persisted and can be reused when you create new JupyterHub servers.
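Once a server with an attached volume is running, a quick check from a notebook cell can confirm that the volume is mounted and writable. The sketch below is a minimal example using only the Python standard library. The helper name `check_volume` is illustrative, and the mount path `/home/jovyan/my-volume` is a hypothetical placeholder, because the actual path depends on how the volume was attached in your deployment.

```python
import tempfile
from pathlib import Path


def check_volume(mount_path: str) -> dict:
    """Report whether a directory exists and accepts writes."""
    path = Path(mount_path)
    result = {"path": str(path), "exists": path.is_dir(), "writable": False}
    if not result["exists"]:
        return result
    try:
        # Create and automatically remove a small temporary file to prove write access.
        with tempfile.NamedTemporaryFile(dir=path, prefix=".write-test-") as handle:
            handle.write(b"ok")
        result["writable"] = True
    except OSError:
        pass  # Read-only or permission-restricted mounts end up here.
    return result


if __name__ == "__main__":
    # Hypothetical mount path; replace it with the path used for your volume.
    print(check_volume("/home/jovyan/my-volume"))
```

If `exists` is false, the volume was probably not attached when the server was created. If `writable` is false, the mount may be read-only.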

Step 4: Open JupyterLab and Verify GPU Access

-

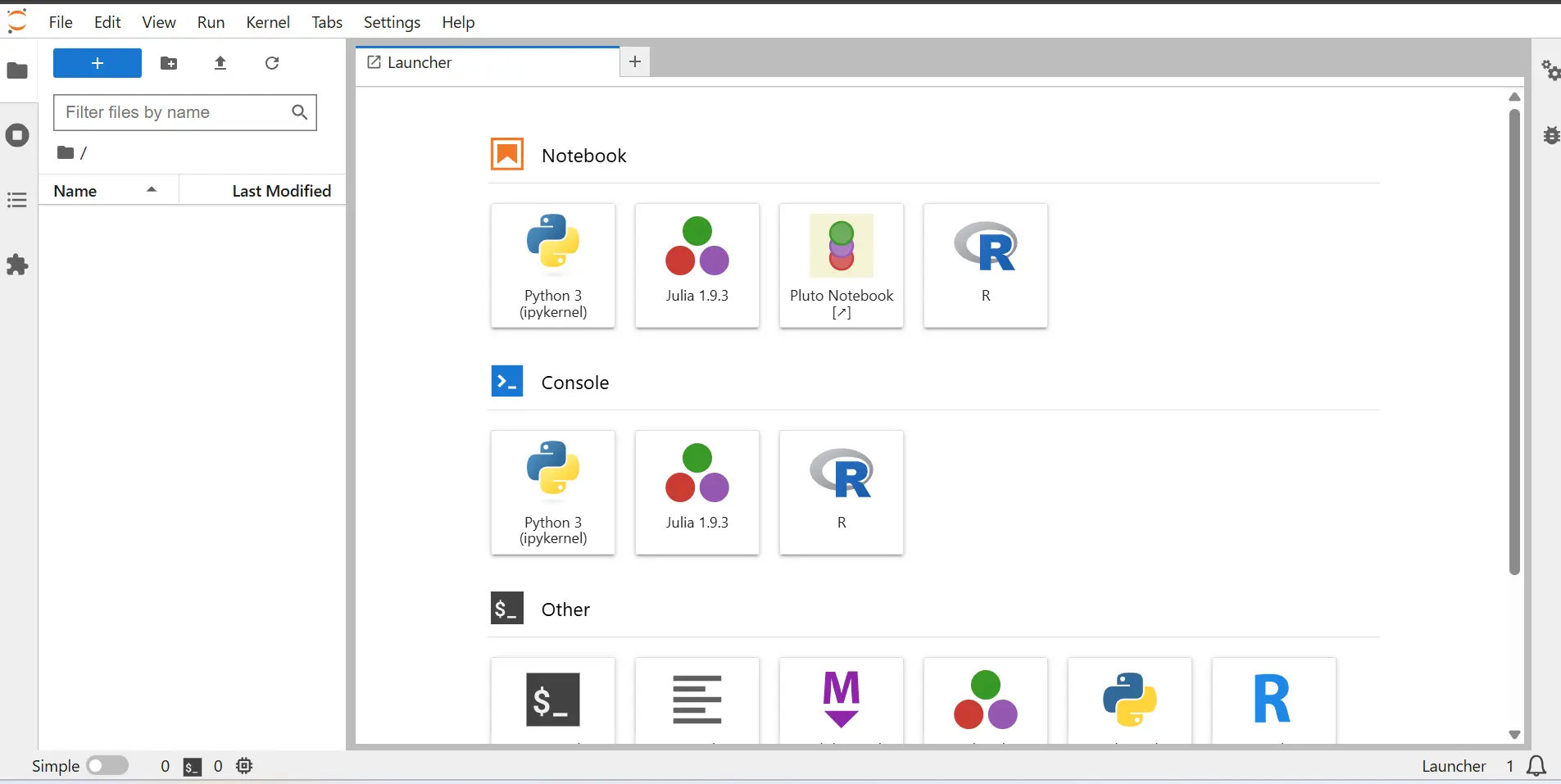

Click the URL shown for your server. JupyterLab opens in a new tab.

-

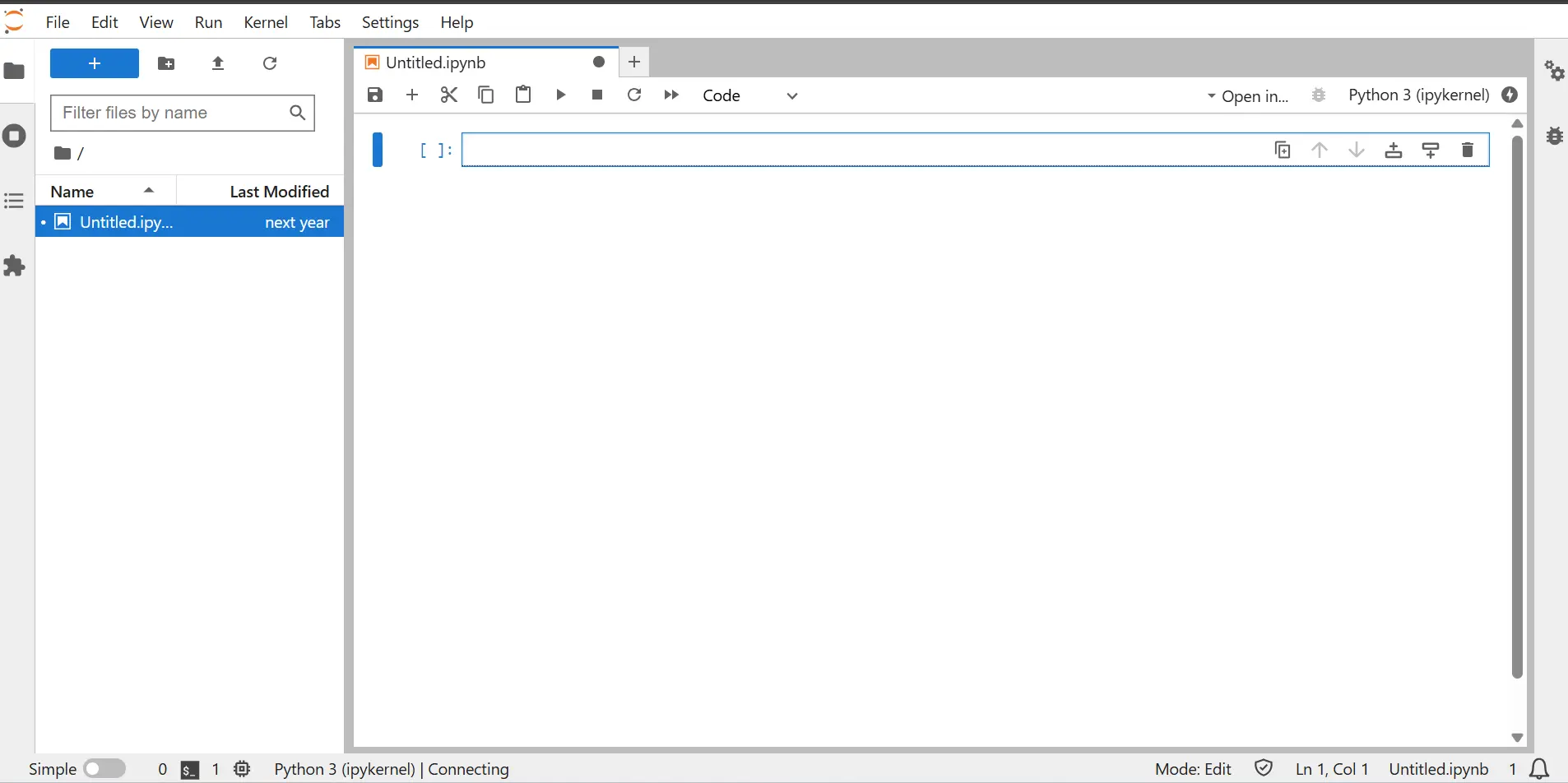

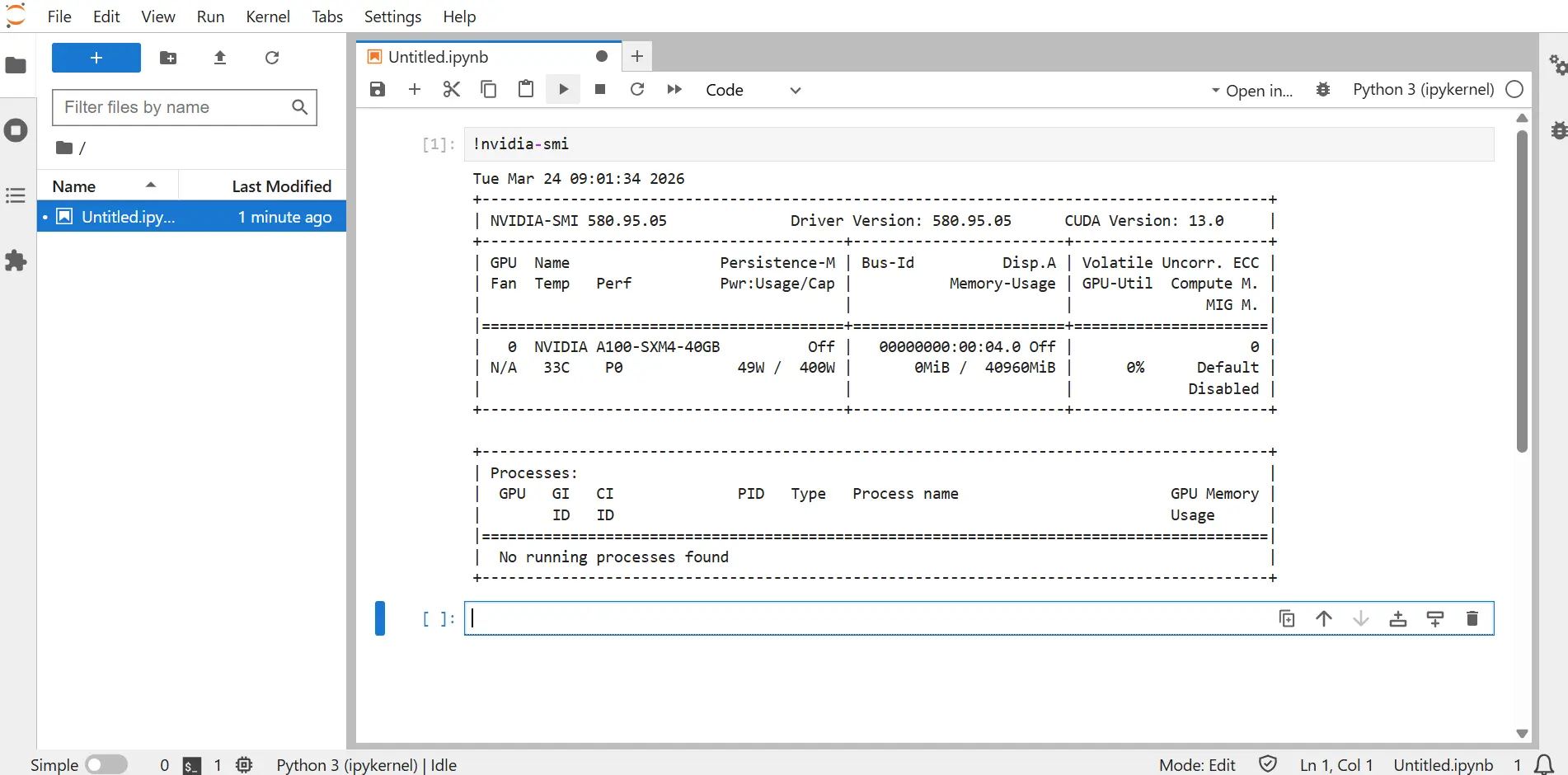

Open a notebook (e.g., click Python 3 (ipykernel)).

-

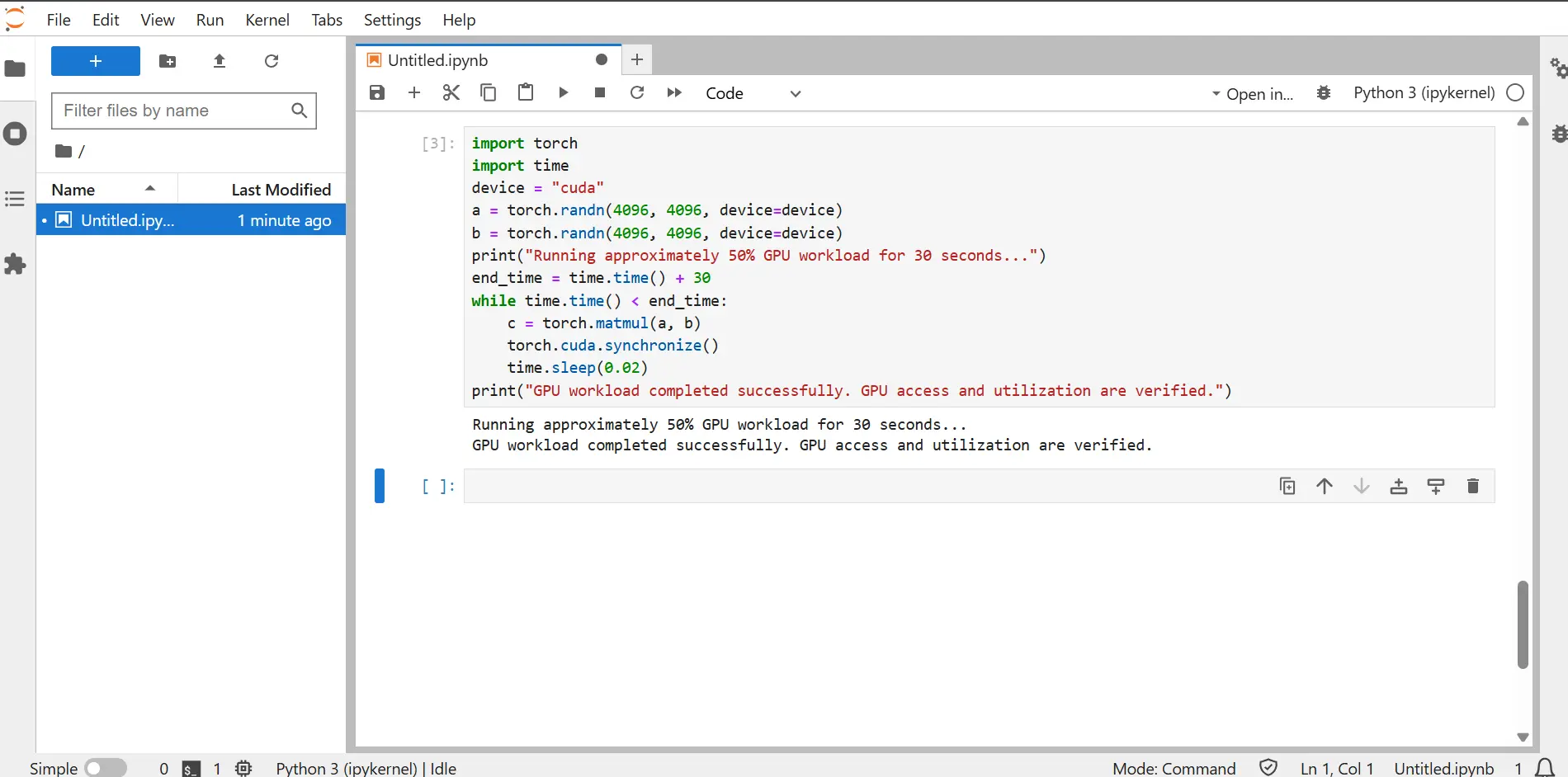

In a cell, run

!nvidia-smito confirm that the GPU is visible.

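- (Optional) To verify GPU utilization, install PyTorch and NumPy, then run the script below.

```shell
pip install torch numpy
```

Run this in a notebook cell:

```python
import time

import torch

# Fail early with a clear message if no CUDA device is visible (for example, on the CPU profile).
if not torch.cuda.is_available():
    raise RuntimeError("No CUDA device is visible to PyTorch.")

device = "cuda"

# Two large matrices keep the GPU busy during each multiplication.
a = torch.randn(4096, 4096, device=device)
b = torch.randn(4096, 4096, device=device)

print("Running approximately 50% GPU workload for 30 seconds...")
end_time = time.time() + 30

while time.time() < end_time:
    c = torch.matmul(a, b)
    torch.cuda.synchronize()  # Wait for the GPU to finish before sleeping.
    time.sleep(0.02)  # Short pause so the GPU is not fully saturated.

print("GPU workload completed successfully. GPU access and utilization are verified.")
```

On success, you should see the completion message in the cell output.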
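To check GPU visibility programmatically rather than by reading the `nvidia-smi` table, the sketch below calls `nvidia-smi -L` and sorts the listed devices into full GPUs and MIG instances. It assumes the usual `nvidia-smi -L` line format, where physical devices start with `GPU` and MIG instances start with `MIG`. The helper names `parse_device_list` and `list_devices` are illustrative and not part of Bridge or JupyterHub.

```python
import shutil
import subprocess


def parse_device_list(text: str) -> dict:
    """Split `nvidia-smi -L` style output into GPU and MIG entries."""
    devices = {"gpus": [], "mig_instances": []}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if line.startswith("GPU "):
            devices["gpus"].append(line)
        elif line.startswith("MIG "):
            devices["mig_instances"].append(line)
    return devices


def list_devices() -> dict:
    """Run `nvidia-smi -L` if it is installed; return empty lists otherwise."""
    if shutil.which("nvidia-smi") is None:
        return parse_device_list("")
    completed = subprocess.run(
        ["nvidia-smi", "-L"], capture_output=True, text=True, check=False
    )
    return parse_device_list(completed.stdout)


if __name__ == "__main__":
    found = list_devices()
    print(f"Full GPUs: {len(found['gpus'])}, MIG instances: {len(found['mig_instances'])}")
```

On a server created with the CPU profile, both lists are expected to be empty. On a MIG profile, at least one MIG entry should appear.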