NVIDIA Spectrum-X

Bridge supports the NVIDIA Spectrum-X Reference Architecture (RA) v1.3, providing automated management of Spectrum-X Ethernet switch fabrics for AI cloud deployments. This includes topology discovery, underlay configuration, multi-tenant overlay networks, and integrated observability for NVIDIA Spectrum-4 SN5000 Series switches, NVIDIA Cumulus Linux, and NVIDIA BlueField-3 SuperNICs and DPUs.

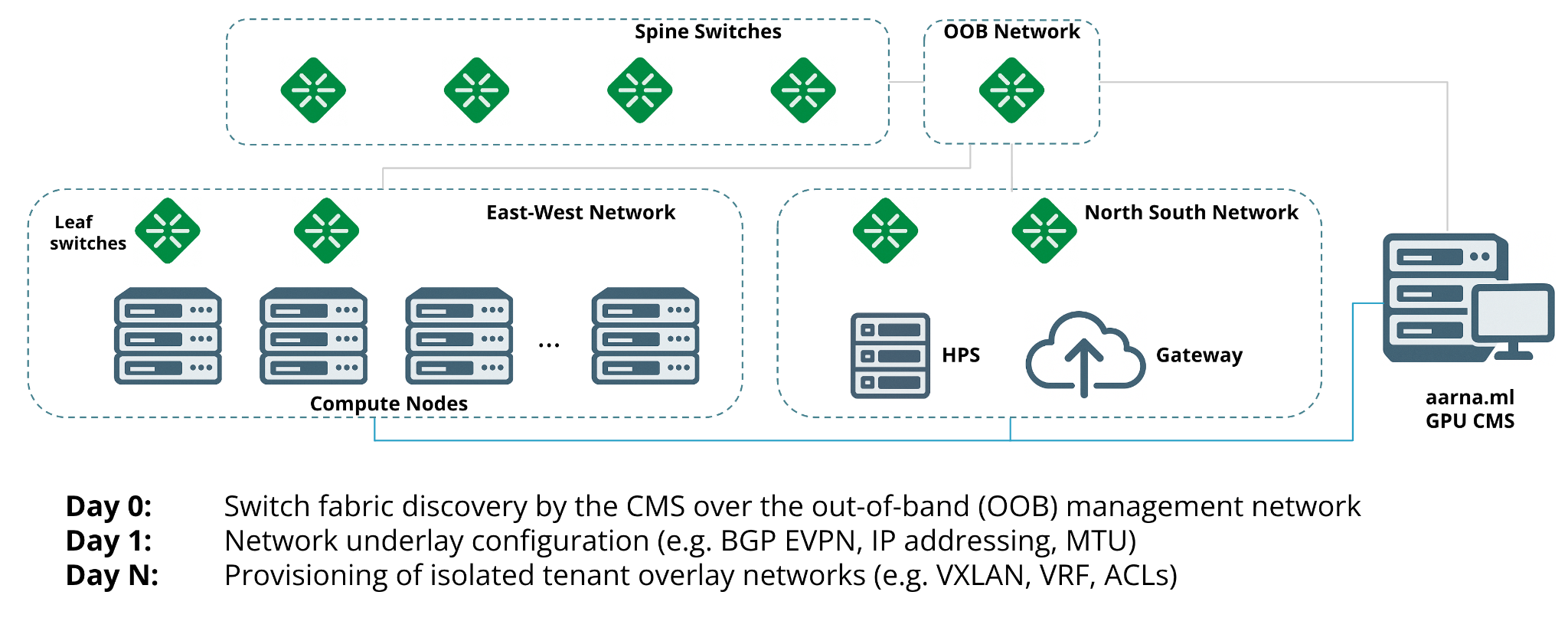

Switch Fabric Architecture

A modern GPU data center switch fabric consists of two primary network segments:

| Network | Purpose |
|---|---|
| East-West (Compute) | GPU-to-GPU communication within and across HGX nodes |
| North-South (Converged) | In-band management, storage access, external/tenant connectivity |

A single Scalable Unit (SU), as defined by the NVIDIA Networking Reference Architecture, contains:

- 32 GPU nodes

- 12 switches

- 256 physical cable connections

This topology serves 256 GPUs (32 nodes × 8 GPUs per node). Managing such fabrics manually is operationally impractical at scale, especially across multiple SUs.
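The per-SU figures can be turned into rough totals for a multi-SU deployment. The Python sketch below derives 8 GPUs per node from 256 GPUs / 32 nodes and multiplies the per-SU counts by the number of SUs. The linear scaling is an assumption: the sketch ignores any extra spine or core switches and inter-SU cabling that a real multi-SU design adds, and `ScalableUnit`/`fabric_totals` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScalableUnit:
    # Per-SU figures from the reference description; gpus_per_node is
    # derived as 256 GPUs / 32 nodes.
    gpu_nodes: int = 32
    switches: int = 12
    cables: int = 256
    gpus_per_node: int = 8

    @property
    def gpus(self) -> int:
        return self.gpu_nodes * self.gpus_per_node


def fabric_totals(num_sus: int, su: ScalableUnit = ScalableUnit()) -> dict[str, int]:
    """Naive linear totals for num_sus SUs (assumption: no extra spine/core tier)."""
    if num_sus < 1:
        raise ValueError("num_sus must be >= 1")
    return {
        "gpu_nodes": num_sus * su.gpu_nodes,
        "gpus": num_sus * su.gpus,
        "switches": num_sus * su.switches,
        "cables": num_sus * su.cables,
    }


print(fabric_totals(1))  # {'gpu_nodes': 32, 'gpus': 256, 'switches': 12, 'cables': 256}
print(fabric_totals(4))  # {'gpu_nodes': 128, 'gpus': 1024, 'switches': 48, 'cables': 1024}
```

Even this lower-bound arithmetic shows why per-device manual configuration stops being practical after a few SUs.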

Switch Fabric Automation

Bridge automates the full lifecycle of Spectrum-X switch fabric management:

| Capability | Description |
|---|---|
| Topology discovery | Automatically discovers all switches, compute nodes, and links from switch fabric MAC addresses |
| Underlay configuration | Configures BGP, IP addressing, and loopback interfaces across the fabric |
| Lifecycle automation | Manages switch configuration updates and drift remediation |
| Overlay network creation | Provisions tenant-isolated VxLAN/VRF overlay networks on demand |
| Compute pipeline integration | Triggers network configuration as part of compute resource allocation flows |

This enables intent-driven, scalable management of switch fabrics so that GPU resources remain performant, isolated, and easy to provision.
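To illustrate what underlay configuration involves, the following Python sketch builds a simple addressing plan for a two-tier leaf-spine fabric. It assigns one /32 loopback per switch, one /31 point-to-point subnet per leaf-spine link, a shared private ASN for the spine tier, and a unique private ASN per leaf, following the common eBGP layout described in RFC 7938. The address pools, ASN values, and `plan_underlay` function are hypothetical. The Spectrum-X RA addressing scheme and the plan Bridge actually generates are not described here, and many Cumulus Linux fabrics use BGP unnumbered instead of numbered /31 links.

```python
import ipaddress
from itertools import product


def plan_underlay(spines, leaves,
                  loopback_pool="10.255.0.0/24",  # hypothetical pools and ASNs
                  p2p_pool="10.254.0.0/22",
                  spine_asn=65000,
                  leaf_asn_base=65101):
    """Build a hypothetical eBGP underlay plan for a leaf-spine fabric."""
    loopback_net = ipaddress.ip_network(loopback_pool)
    p2p_net = ipaddress.ip_network(p2p_pool)

    # Fail early if the pools are too small for the requested fabric.
    switches = [*spines, *leaves]
    if len(switches) > loopback_net.num_addresses - 2:
        raise ValueError("loopback pool too small")
    if len(spines) * len(leaves) > p2p_net.num_addresses // 2:
        raise ValueError("point-to-point pool too small")

    loopbacks = loopback_net.hosts()                  # usable host addresses
    p2p_subnets = p2p_net.subnets(new_prefix=31)      # one /31 per link

    plan = {"loopbacks": {}, "asn": {}, "links": []}
    for switch in switches:
        plan["loopbacks"][switch] = f"{next(loopbacks)}/32"
    for spine in spines:
        plan["asn"][spine] = spine_asn                # spine tier shares one ASN
    for i, leaf in enumerate(leaves):
        plan["asn"][leaf] = leaf_asn_base + i         # unique private ASN per leaf

    # Every leaf connects to every spine.
    for leaf, spine in product(leaves, spines):
        subnet = next(p2p_subnets)
        plan["links"].append({
            "leaf": leaf, "leaf_ip": f"{subnet[0]}/31",
            "spine": spine, "spine_ip": f"{subnet[1]}/31",
        })
    return plan


plan = plan_underlay(["spine01", "spine02"], ["leaf01", "leaf02", "leaf03"])
print(plan["loopbacks"]["leaf01"])  # 10.255.0.3/32
print(plan["asn"]["leaf03"])        # 65103
print(plan["links"][0])
```

Generating the plan from a declared inventory, rather than typing it per switch, is what lets the same intent be re-applied when drift is detected.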

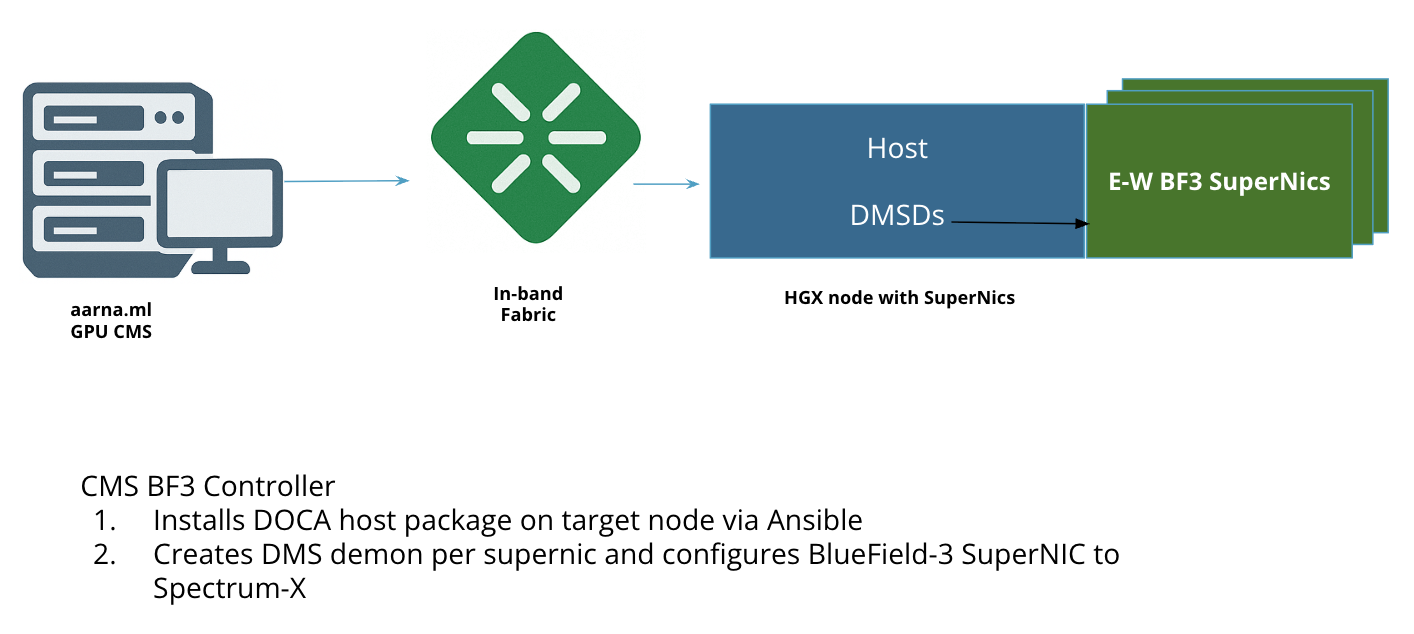

BlueField-3 SuperNIC Configuration

Bridge automates all tasks required to turn a BlueField-3–equipped server into a Spectrum-X host:

- Install DOCA Host Packages: Bridge installs the DOCA Host Packages on the server, enabling key services and libraries.

- Start DOCA Management Service Daemon (DMSD): Bridge brings up DMSD, which runs on the host and acts as the control point for the SuperNIC.

- Configure Spectrum-X capabilities: Using DMSD, Bridge configures the BlueField-3 SuperNIC with:

  - RoCE (RDMA over Converged Ethernet)
  - Congestion control
  - Adaptive routing
  - IP routing
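These tasks have a strict order: DMSD depends on the DOCA Host Packages, and the Spectrum-X features are configured through DMSD. The following minimal sketch shows an ordered pipeline that can be re-run safely. Each step first checks whether it is already applied and then verifies that it converged. The host is modeled as a plain dictionary, and the step names and `Step`/`run_pipeline` helpers are hypothetical. A real implementation would call package managers and the DMSD interface, neither of which is shown here.

```python
from dataclasses import dataclass
from typing import Callable

Host = dict  # simulated host state, standing in for real probes


@dataclass(frozen=True)
class Step:
    name: str
    is_done: Callable[[Host], bool]  # probe: is this step already applied?
    apply: Callable[[Host], None]    # action: apply the step


def run_pipeline(host: Host, steps: list[Step]) -> list[str]:
    """Run steps in order, skip completed ones, raise if a step does not converge."""
    log = []
    for step in steps:
        if step.is_done(host):
            log.append(f"skip {step.name}")
            continue
        step.apply(host)
        if not step.is_done(host):
            raise RuntimeError(f"step {step.name!r} did not converge")
        log.append(f"done {step.name}")
    return log


SPECTRUM_X_FEATURES = {"roce", "congestion_control", "adaptive_routing", "ip_routing"}

# Hypothetical steps mirroring the three tasks above; each apply() only
# succeeds if the step it depends on has already completed.
STEPS = [
    Step("install-doca-host-packages",
         lambda h: h.get("doca_installed", False),
         lambda h: h.update(doca_installed=True)),
    Step("start-dmsd",
         lambda h: h.get("dmsd_running", False),
         lambda h: h.update(dmsd_running=h.get("doca_installed", False))),
    Step("configure-spectrum-x",
         lambda h: SPECTRUM_X_FEATURES <= h.get("features", set()),
         lambda h: h.update(features=set(SPECTRUM_X_FEATURES))
         if h.get("dmsd_running") else None),
]

host = {}
print(run_pipeline(host, STEPS))  # first run: every step reports "done"
print(run_pipeline(host, STEPS))  # second run: every step reports "skip"
```

Because each step probes before acting, re-running the pipeline on an already-configured host does no work. Running a later step without its prerequisite fails loudly instead of half-configuring the SuperNIC.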

After this process, the host is fully Spectrum-X aware and ready for high-performance GPU-to-GPU networking. Multi-tenancy is enforced at the network level by BGP EVPN running under Cumulus Linux on the Spectrum-X switches.

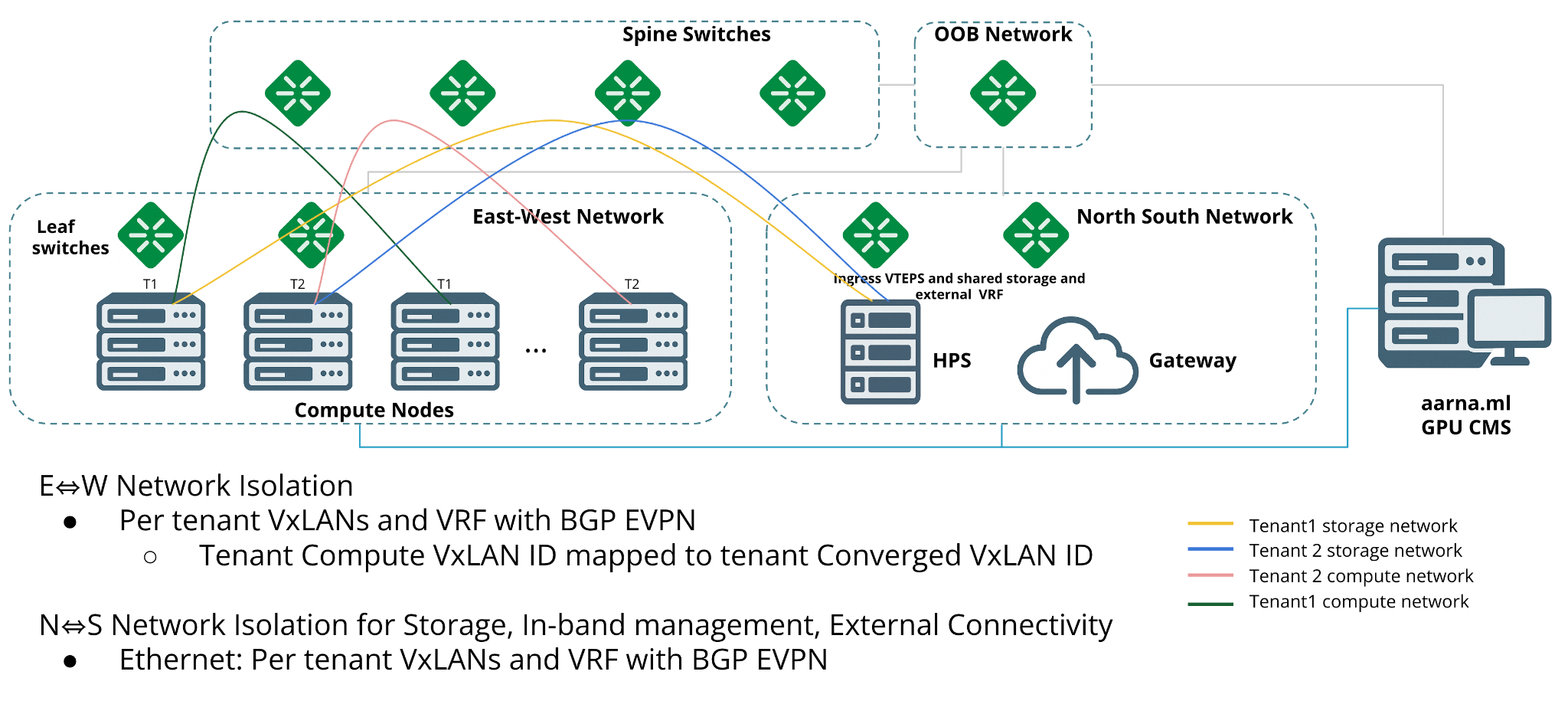

Network Multi-Tenancy

Bridge creates tenant-isolated overlay networks on the Ethernet switch fabric using VxLAN and VRF with BGP as the control plane. From the tenant perspective, this is surfaced as Virtual Private Clouds (VPCs) with multiple subnets, consistent with public cloud semantics.

Internally, each tenant-facing abstraction maps to a fabric construct:

| Abstraction | Implementation |
|---|---|
| VPC | Isolated VRF (Virtual Routing and Forwarding) instance |
| Subnet | Unique VxLAN segment |

Bridge uses NVIDIA Cumulus Linux on Spectrum-X switches, which exposes programmable network primitives via the NVIDIA User Experience (NVUE) command interface. As part of VPC provisioning, Bridge dynamically configures VRFs, VXLANs, VLANs, and BGP sessions on the switch fabric through NVUE commands.
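The VPC-to-fabric mapping can be sketched as a function that renders NVUE-style commands for one VPC. The VPC gets one VRF with an L3 VNI. Each subnet gets a VLAN-to-VNI mapping on the bridge and a VLAN interface placed in that VRF. The command syntax is modeled on Cumulus Linux 5.x NVUE and may differ between releases, so verify it against your version. The `Vpc`/`Subnet` types, the VLAN/VNI numbering, and the use of the default `br_default` bridge are assumptions. Global EVPN/VxLAN settings and per-VRF BGP configuration are assumed to already be in place and are omitted. This is not Bridge's actual implementation.

```python
from dataclasses import dataclass

MAX_VNI = 2**24 - 1  # VNIs are 24-bit identifiers


@dataclass(frozen=True)
class Subnet:
    vlan: int       # leaf-local VLAN ID mapped to the subnet's VxLAN segment
    vni: int        # L2 VNI: one VxLAN segment per subnet
    gateway: str    # gateway address on the VLAN interface, with prefix length


@dataclass(frozen=True)
class Vpc:
    vrf: str                      # one VRF per VPC
    l3_vni: int                   # VNI for routed traffic within the VRF (EVPN)
    subnets: tuple[Subnet, ...]


def nvue_commands(vpc: Vpc, bridge: str = "br_default") -> list[str]:
    """Render NVUE-style commands for one VPC (hypothetical helper)."""
    if not 1 <= vpc.l3_vni <= MAX_VNI:
        raise ValueError(f"L3 VNI out of range: {vpc.l3_vni}")
    cmds = [f"nv set vrf {vpc.vrf} evpn vni {vpc.l3_vni}"]
    for s in vpc.subnets:
        if not 1 <= s.vlan <= 4094:
            raise ValueError(f"VLAN out of range: {s.vlan}")
        if not 1 <= s.vni <= MAX_VNI or s.vni == vpc.l3_vni:
            raise ValueError(f"invalid L2 VNI: {s.vni}")
        cmds += [
            f"nv set bridge domain {bridge} vlan {s.vlan} vni {s.vni}",  # subnet -> VxLAN segment
            f"nv set interface vlan{s.vlan} ip vrf {vpc.vrf}",          # SVI joins the tenant VRF
            f"nv set interface vlan{s.vlan} ip address {s.gateway}",
        ]
    cmds.append("nv config apply")
    return cmds


blue = Vpc("BLUE", 4001, (Subnet(10, 10010, "10.1.10.1/24"),
                          Subnet(20, 10020, "10.1.20.1/24")))
print("\n".join(nvue_commands(blue)))
```

Rendering commands from a typed description keeps validation (VLAN and VNI ranges, no L2 VNI reused as the L3 VNI) ahead of any change to the switch.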

VxLAN and VRF-Based Tenant Segmentation

Bridge uses VxLAN combined with VRF constructs to support multiple tenants on the same physical fabric with complete L2/L3 isolation. Each tenant's workloads operate within a dedicated overlay network backed by a separate VRF instance, enabling:

- Independent routing policies per tenant

- Security boundary enforcement between tenants

- Full traffic segmentation without performance degradation
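Each tenant's overlay is backed by its own VRF and therefore its own routing table. Address overlap only matters within a VRF, so two tenants can reuse the same private range without conflict. The following minimal Python check illustrates that rule. The `(vrf, cidr)` input shape is hypothetical, and whether Bridge permits overlapping tenant CIDRs is not stated in this document.

```python
import ipaddress
from collections import defaultdict
from itertools import combinations


def find_conflicts(assignments):
    """Return overlapping (vrf, cidr_a, cidr_b) pairs, checked per VRF only.

    assignments: iterable of (vrf_name, cidr) tuples (hypothetical input shape).
    """
    by_vrf = defaultdict(list)
    for vrf, cidr in assignments:
        by_vrf[vrf].append(ipaddress.ip_network(cidr))
    conflicts = []
    for vrf, nets in by_vrf.items():
        for a, b in combinations(nets, 2):   # only compare within one routing table
            if a.overlaps(b):
                conflicts.append((vrf, str(a), str(b)))
    return conflicts


subnets = [
    ("tenant-a", "10.0.0.0/16"),
    ("tenant-b", "10.0.0.0/16"),    # same range, different VRF: allowed
    ("tenant-a", "10.0.128.0/24"),  # inside tenant-a's /16: conflict
]
print(find_conflicts(subnets))  # [('tenant-a', '10.0.0.0/16', '10.0.128.0/24')]
```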

Storage Network Isolation

Bridge provisions dedicated VRFs for storage traffic, so each tenant's storage access runs on isolated, separately tunable network paths. This applies whether the tenant uses NFS, NVMe over Fabrics (NVMe-oF), or object storage gateways.

In-Band Network Isolation

In-band management, control-plane signaling, and monitoring traffic are carried over logically separate paths within the Spectrum-X fabric. These paths use the same VxLAN/VRF segmentation model, so full tenant isolation is maintained.

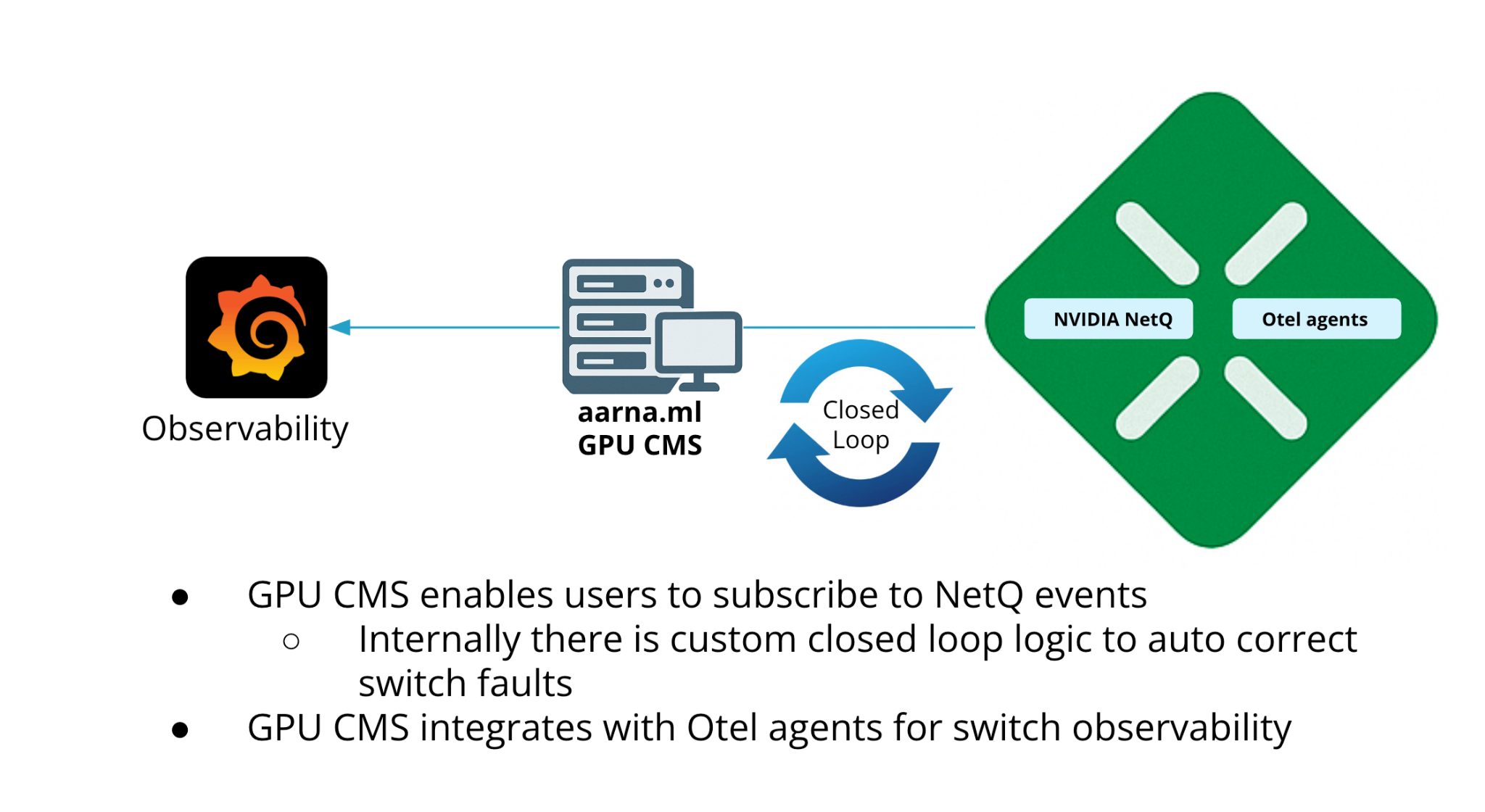

Observability and Fault Management

Bridge supports both NetQ-based and OTLP-based telemetry for NVIDIA Spectrum-X switches. The OTLP integration operates independently of NetQ.

OTLP Telemetry

Cumulus Linux supports OpenTelemetry (OTEL) export using the OpenTelemetry Protocol (OTLP), enabling switch metrics to be exported to external collectors.

Switch telemetry via Cumulus NVUE:

NVIDIA Cumulus Linux on Spectrum-X switches exports the following metrics via NVUE in OTEL format:

| Metric Category | Examples |
|---|---|
| Buffer metrics | Buffer occupancy histograms |
| Interface metrics | Bandwidth, errors, drops |
| Platform metrics | Temperature, fan speed, power consumption |
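Histogram metrics such as buffer occupancy arrive as OTLP histogram data points. Each data point carries `explicit_bounds` and `bucket_counts`, with one more count than bounds because the last bucket is unbounded above. A common way to summarize such a histogram on the collector side is to estimate a percentile by linear interpolation within the bucket that contains it. The Python sketch below does this. The bucket bounds and counts in the example are made up. Treating 0 as the lower edge of the first bucket is an assumption that fits non-negative quantities such as buffer occupancy.

```python
from bisect import bisect_left
from itertools import accumulate


def histogram_quantile(q, explicit_bounds, bucket_counts, lower=0.0):
    """Estimate the q-quantile of an OTLP explicit-bucket histogram.

    Bucket i covers (explicit_bounds[i-1], explicit_bounds[i]]; the last bucket
    is unbounded above. Values are interpolated linearly inside a bucket.
    `lower` is the assumed minimum value (0 for buffer occupancy).
    """
    if not 0.0 <= q <= 1.0:
        raise ValueError("q must be in [0, 1]")
    if not explicit_bounds or len(bucket_counts) != len(explicit_bounds) + 1:
        raise ValueError("need len(bucket_counts) == len(explicit_bounds) + 1 >= 2")
    total = sum(bucket_counts)
    if total == 0:
        raise ValueError("histogram is empty")

    cumulative = list(accumulate(bucket_counts))
    rank = q * total
    i = bisect_left(cumulative, rank)      # first bucket whose running count reaches rank
    if i == len(explicit_bounds):          # overflow bucket: no upper bound to interpolate to
        return float(explicit_bounds[-1])
    lo = lower if i == 0 else explicit_bounds[i - 1]
    hi = explicit_bounds[i]
    below = cumulative[i - 1] if i > 0 else 0
    frac = (rank - below) / bucket_counts[i] if bucket_counts[i] else 1.0
    return lo + (hi - lo) * frac


bounds = [1_000, 10_000, 100_000]   # hypothetical bucket bounds in bytes
counts = [50, 30, 15, 5]            # hypothetical sample counts per bucket
print(histogram_quantile(0.5, bounds, counts))            # 1000.0
print(round(histogram_quantile(0.9, bounds, counts), 1))  # 70000.0
```

When a quantile falls in the overflow bucket, the estimate is clamped to the last bound, because the histogram carries no information about how far above it the samples lie.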

SuperNIC telemetry via DOCA DTS:

The DOCA Telemetry Service (DTS) collects real-time SuperNIC metrics using the DOCA Telemetry library, supporting:

- High-Frequency Telemetry (HFT)

- Programmable Congestion Control (PCC)

- AMBER counters, ethtool counters, and sysfs metrics
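Counters such as ethtool counters are cumulative, so consumers usually convert successive samples into per-second rates. The following Python sketch shows that conversion and treats a decrease as a wrap of a fixed-width counter. The `Sample` shape and `counter_rate` name are hypothetical and are not the DTS export format. A device reset also makes a counter go backwards, and this sketch cannot tell the two cases apart.

```python
from typing import NamedTuple


class Sample(NamedTuple):
    timestamp_s: float   # collection time in seconds
    value: int           # cumulative counter value (e.g. an ethtool counter)


def counter_rate(prev: Sample, curr: Sample, width_bits: int = 64) -> float:
    """Per-second rate between two cumulative counter samples.

    A decrease is treated as a single wrap of a width_bits-wide counter.
    """
    dt = curr.timestamp_s - prev.timestamp_s
    if dt <= 0:
        raise ValueError("samples must be in increasing time order")
    delta = curr.value - prev.value
    if delta < 0:
        delta += 1 << width_bits   # counter wrapped past its maximum value
    return delta / dt


print(counter_rate(Sample(0.0, 1_000), Sample(2.0, 5_000)))                     # 2000.0
print(counter_rate(Sample(0.0, 2**32 - 100), Sample(1.0, 100), width_bits=32))  # 200.0
```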

NetQ Integration

Bridge integrates with NVIDIA NetQ for event-driven fault detection and auto-remediation. The NCP Admin (NVIDIA Cloud Partner administrator) can subscribe to NetQ events from within Bridge. When a subscribed event is triggered, Bridge invokes remediation logic that corrects the fault automatically, without manual intervention.

Bridge subscribes to and auto-corrects the following fault scenarios:

| Fault Type | Detection and Response |
|---|---|
| Switch failure | Power loss or hardware faults trigger alerts and automated mitigation workflows |
| Link failure | Physical interface failures, port errors, and degradation are identified in real time |
| Configuration drift | Interface resets or unauthorized modifications are continuously audited and flagged |
| BGP session state | BGP flaps and neighbor disconnects are monitored to maintain routing stability |
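The subscribe-then-remediate pattern can be sketched as a small dispatcher. Events of a subscribed type are routed to a handler, and events without a subscription are ignored. The event types, `FabricEvent` fields, and handler actions below are hypothetical. The NetQ event schema and Bridge's remediation logic are not described in this document, and the handlers only return a description of the action instead of performing it.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class FabricEvent:
    kind: str         # hypothetical event type, e.g. "config_drift"
    device: str       # switch that raised the event
    detail: str = ""  # e.g. BGP neighbor or interface name


Handler = Callable[[FabricEvent], str]


class RemediationDispatcher:
    def __init__(self) -> None:
        self._handlers: dict[str, Handler] = {}

    def subscribe(self, kind: str, handler: Handler) -> None:
        self._handlers[kind] = handler

    def dispatch(self, event: FabricEvent) -> str:
        handler = self._handlers.get(event.kind)
        if handler is None:
            return f"ignored {event.kind} on {event.device} (not subscribed)"
        return handler(event)


dispatcher = RemediationDispatcher()
dispatcher.subscribe("switch_failure", lambda e: f"quarantine {e.device} and raise an alert")
dispatcher.subscribe("config_drift", lambda e: f"re-apply intended configuration to {e.device}")
dispatcher.subscribe("bgp_session_down", lambda e: f"reset BGP session to {e.detail} on {e.device}")

print(dispatcher.dispatch(FabricEvent("config_drift", "leaf07")))
# re-apply intended configuration to leaf07
print(dispatcher.dispatch(FabricEvent("bgp_session_down", "leaf07", "spine02")))
# reset BGP session to spine02 on leaf07
print(dispatcher.dispatch(FabricEvent("fan_warning", "spine01")))
# ignored fan_warning on spine01 (not subscribed)
```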

Topology Validation

The NCP Admin can trigger NetQ topology validation from within Bridge to verify that the discovered switch fabric matches the intended topology design. This confirms consistency between the actual network state and network intent, and identifies discrepancies before they affect workloads.
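At its core, comparing discovered and intended topology is a set difference over links. The Python sketch below is not how NetQ performs validation. It only illustrates the comparison concept. The `(device_a, port_a, device_b, port_b)` link format is hypothetical, modeled on LLDP-style neighbor data. Endpoints are put in canonical order so that a link reported from either side compares equal.

```python
from typing import Iterable

# A link as (device_a, port_a, device_b, port_b), e.g. from LLDP neighbor data.
Link = tuple[str, str, str, str]


def canonical(link: Link) -> Link:
    """Order the two endpoints so A-B and B-A compare equal."""
    a, b = (link[0], link[1]), (link[2], link[3])
    return (*a, *b) if a <= b else (*b, *a)


def validate_topology(intended: Iterable[Link], discovered: Iterable[Link]) -> dict[str, list[Link]]:
    want = {canonical(link) for link in intended}
    have = {canonical(link) for link in discovered}
    return {
        "missing": sorted(want - have),     # in the design but not observed
        "unexpected": sorted(have - want),  # observed but not in the design
    }


intended = [("leaf01", "swp1", "spine01", "swp1"), ("leaf01", "swp2", "spine02", "swp1")]
discovered = [("spine01", "swp1", "leaf01", "swp1"), ("leaf01", "swp2", "spine02", "swp3")]
result = validate_topology(intended, discovered)
print(result["missing"])     # [('leaf01', 'swp2', 'spine02', 'swp1')]
print(result["unexpected"])  # [('leaf01', 'swp2', 'spine02', 'swp3')]
```

A cable plugged into the wrong port shows up as one missing link plus one unexpected link, which points directly at the port to fix.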

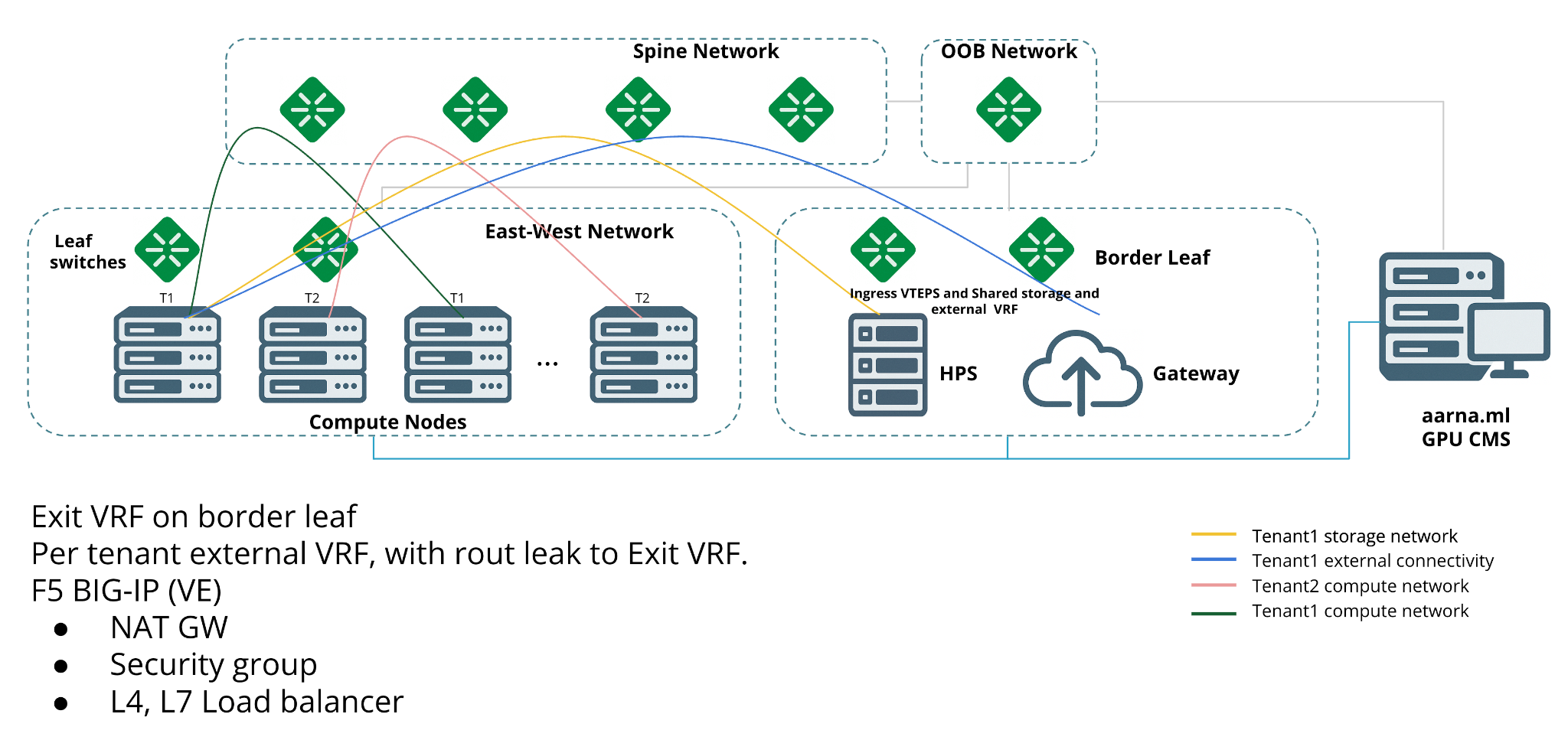

External Connectivity

Bridge provides external connectivity in conjunction with F5 BIG-IP Virtual Edition. At the edge of each tenant's network, border leaf switches enforce routing policies, external access controls (such as NAT and firewall rules), and traffic shaping.

Bridge automatically configures border leaf switches to bridge the isolated VxLAN/VRF overlays with tenant-owned infrastructure or shared external services, supporting hybrid-cloud network extensions.

External connectivity via border leaf and F5 BIG-IP is outside the scope of the NVIDIA Spectrum-X Reference Architecture but is supported by Bridge as an extension of the RA.
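Border leaves enforce routing policies on what each tenant VRF exchanges with external networks. One common building block for such policies is a prefix list with `ge`/`le` length ranges, evaluated first-match with an implicit deny, as in FRRouting, the routing suite used by Cumulus Linux. The Python sketch below models those matching semantics. The rules, prefixes, and `PrefixRule`/`evaluate` names are hypothetical, and this document does not say how Bridge expresses border-leaf policies.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PrefixRule:
    action: str                # "permit" or "deny"
    prefix: str                # e.g. "10.1.0.0/16"
    ge: Optional[int] = None   # minimum matching prefix length
    le: Optional[int] = None   # maximum matching prefix length

    def matches(self, route) -> bool:
        net = ipaddress.ip_network(self.prefix)
        if route.version != net.version or not route.subnet_of(net):
            return False
        if self.ge is None and self.le is None:
            return route.prefixlen == net.prefixlen   # no range: exact length only
        lo = self.ge if self.ge is not None else net.prefixlen
        hi = self.le if self.le is not None else net.max_prefixlen
        return lo <= route.prefixlen <= hi


def evaluate(rules, route_str: str) -> str:
    route = ipaddress.ip_network(route_str)
    for rule in rules:
        if rule.matches(route):   # first match wins
            return rule.action
    return "deny"                 # implicit deny when nothing matches


# Hypothetical policy: never accept a default route; allow the tenant's
# 10.1.0.0/16 and any of its subnets down to /24.
rules = [PrefixRule("deny", "0.0.0.0/0"), PrefixRule("permit", "10.1.0.0/16", le=24)]
print(evaluate(rules, "10.1.20.0/24"))    # permit
print(evaluate(rules, "10.1.20.128/25"))  # deny (longer than /24)
print(evaluate(rules, "0.0.0.0/0"))       # deny
```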