JupyterHub with KAI Scheduler

Overview

The JupyterHub with KAI Scheduler template provisions a Kubernetes cluster with JupyterHub and KAI Scheduler installed, for interactive notebook workloads and scheduled jobs. Use this template when you need a ready-to-use JupyterHub environment on Kubernetes.

Accessing the cluster

Bridge provides two ways to work with your cluster after it is created:

-

Download kubeconfig — You can download the cluster kubeconfig file from the cluster menu. Use this file to access the cluster from your local machine or external tools (e.g.,

kubectl, IDEs, or CI/CD pipelines) by settingKUBECONFIGor merging the file into your default kubeconfig. -

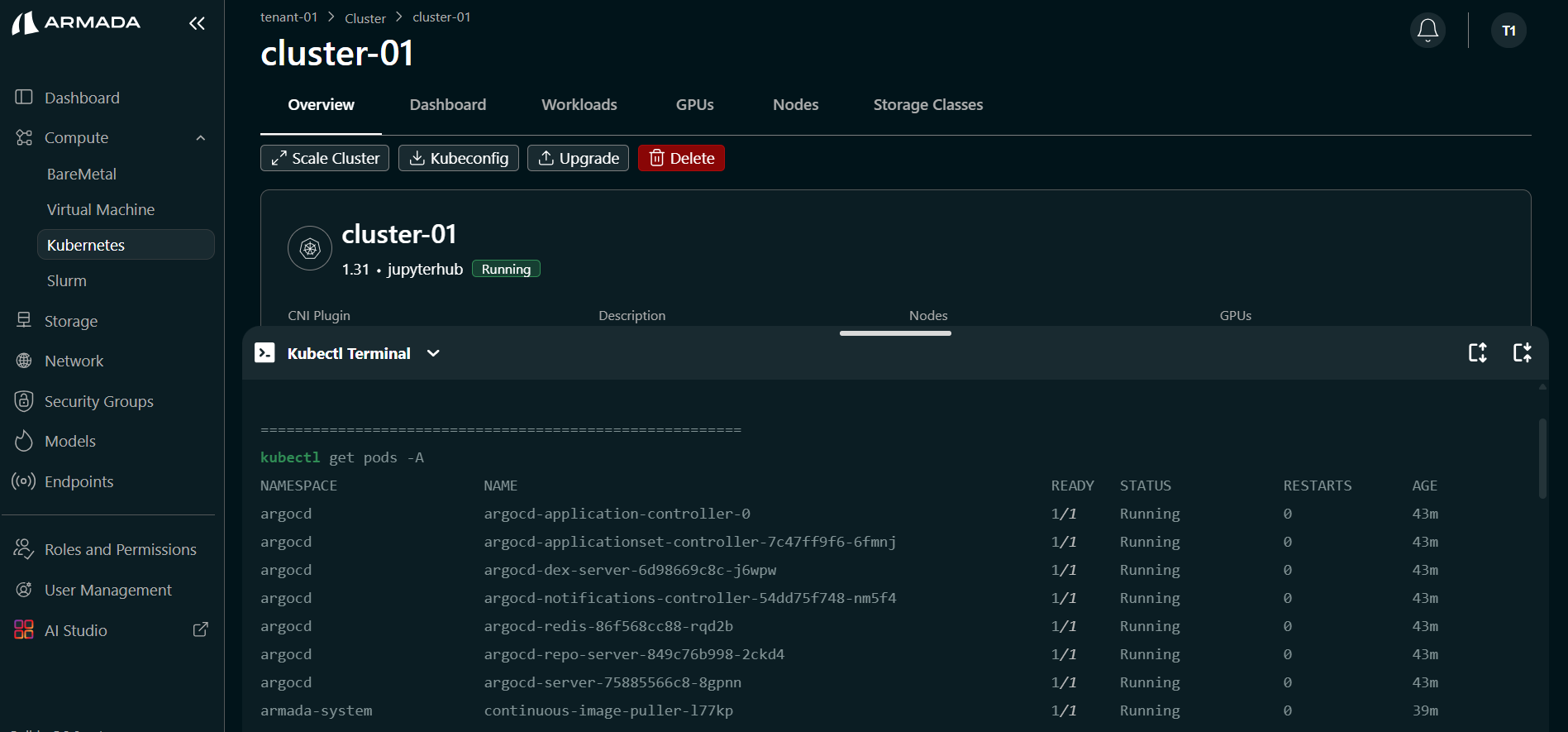

Kubectl Terminal — The Kubectl Terminal feature in Bridge lets you interact directly with the Kubernetes cluster from Bridge UI. You do not need to log in to the cluster separately from an external terminal. With this feature you can:

- Run kubectl commands directly from the UI

- Manage and monitor cluster resources without switching to a separate command-line environment

- Perform cluster operations from the Bridge UI and save time

This guide covers:

- Configuring cluster name, version, and CNI

- Selecting the JupyterHub with KAI Scheduler template and hostname

- Selecting nodes and monitoring creation until the cluster is Running

- Downloading kubeconfig, viewing nodes and GPUs, and using the Kubectl Terminal

Prerequisites

- Tenant Admin access — Log in as a Tenant Admin to create clusters.

- Compute resources — Bare Metal or Virtual Machine resources allocated to your tenant.

Create a JupyterHub with KAI Scheduler Cluster

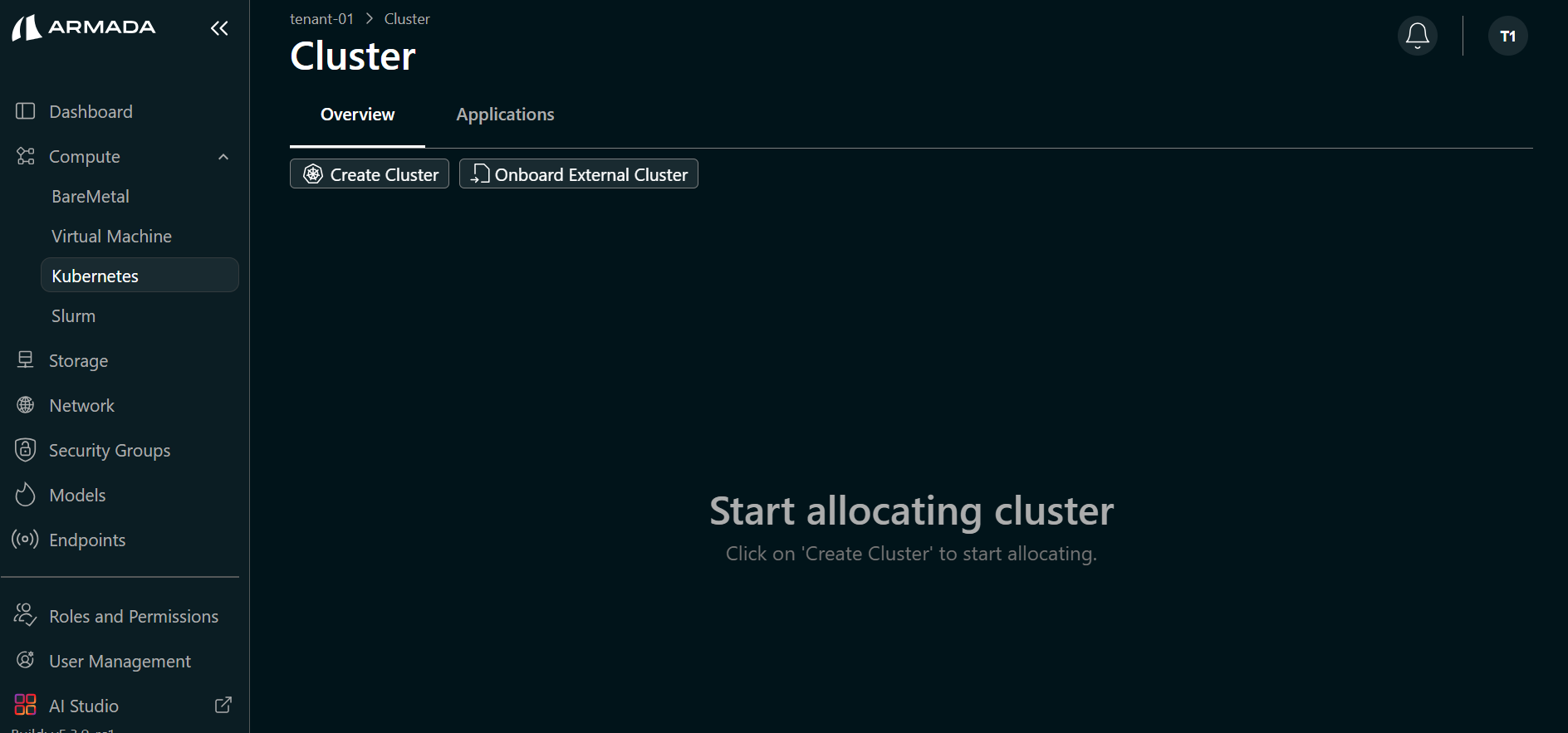

Step 1: Start Cluster Creation

- Log in to Bridge as a Tenant Admin.

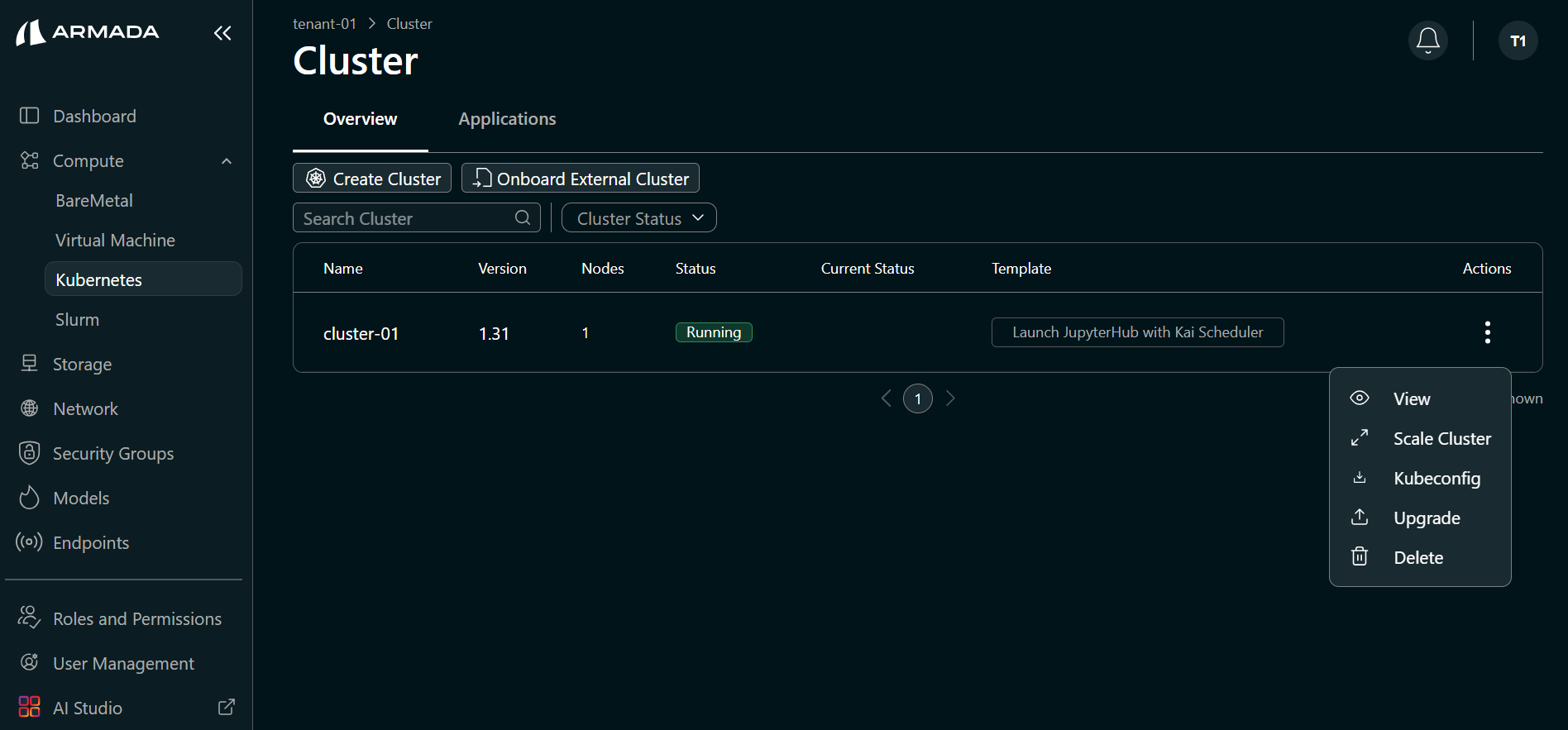

- In the left sidebar, click Compute → Cluster.

- Click Create Cluster.

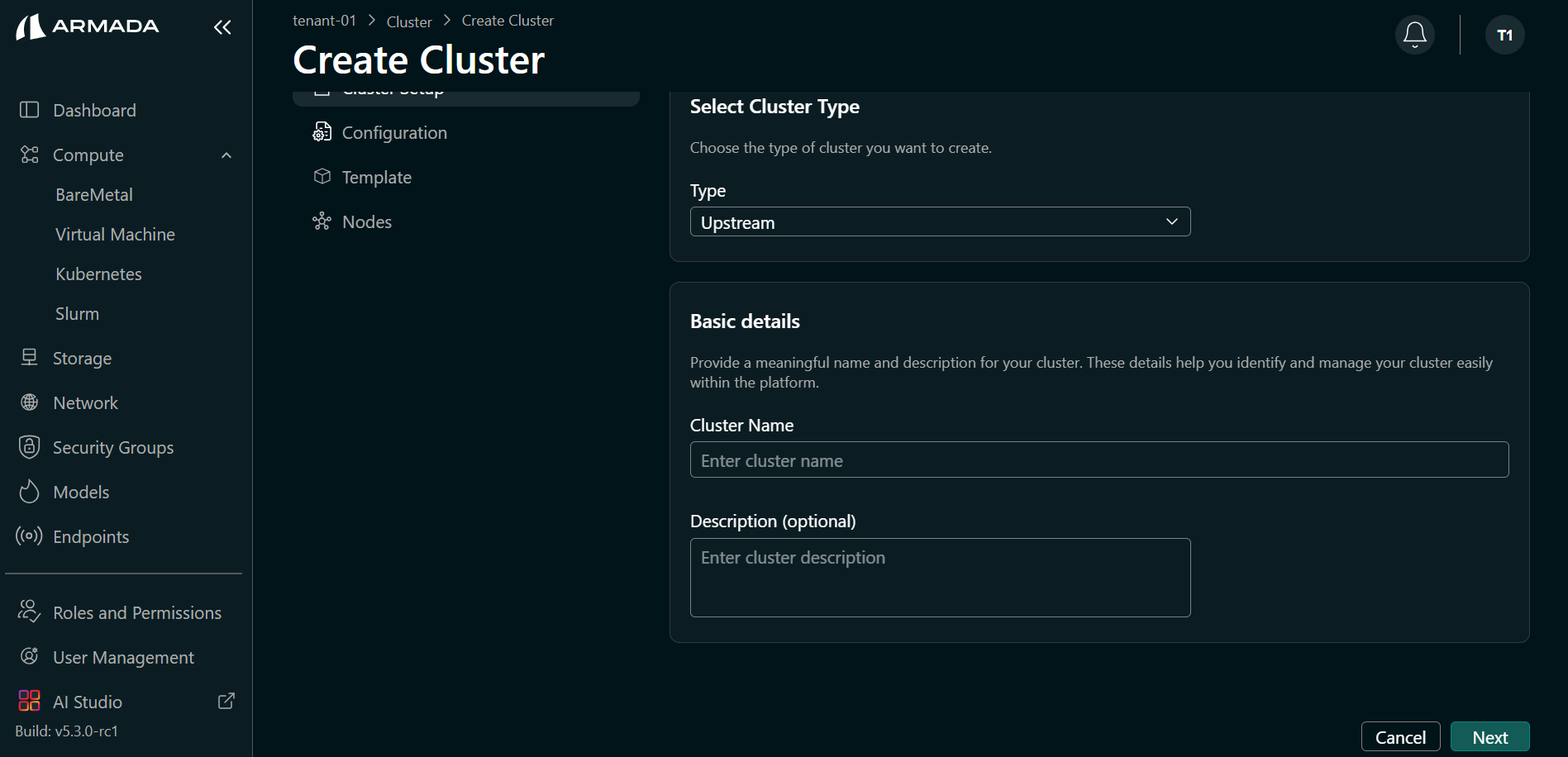

Step 2: Configure Cluster Details

- Enter a name and description for the cluster, then click Next.

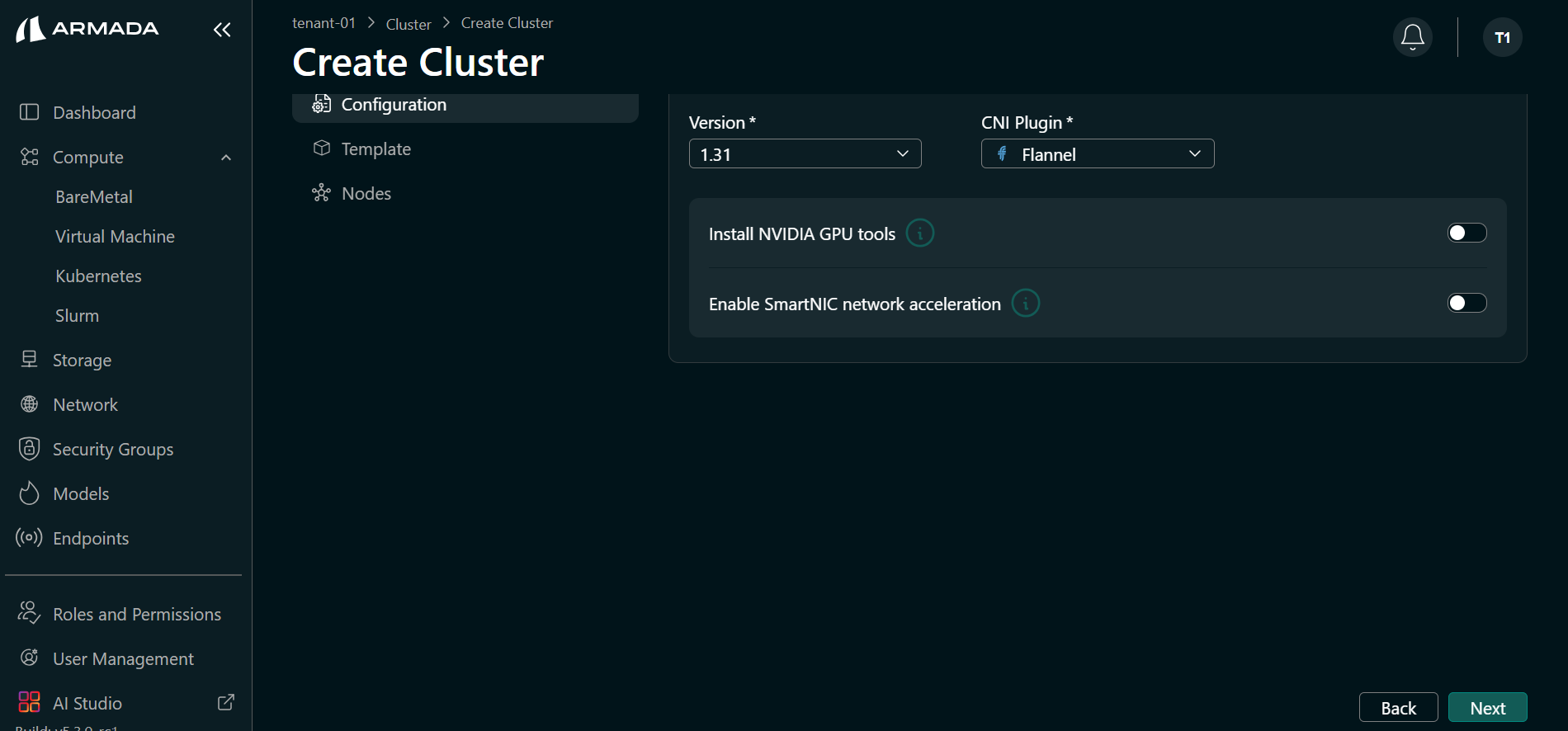

- Select the Kubernetes version.

- Select the CNI plugin. Bridge supports Flannel and Cilium.

- (Optional) Enable Install NVIDIA GPU tools if you want GPU tooling on the cluster.

- Click Next.

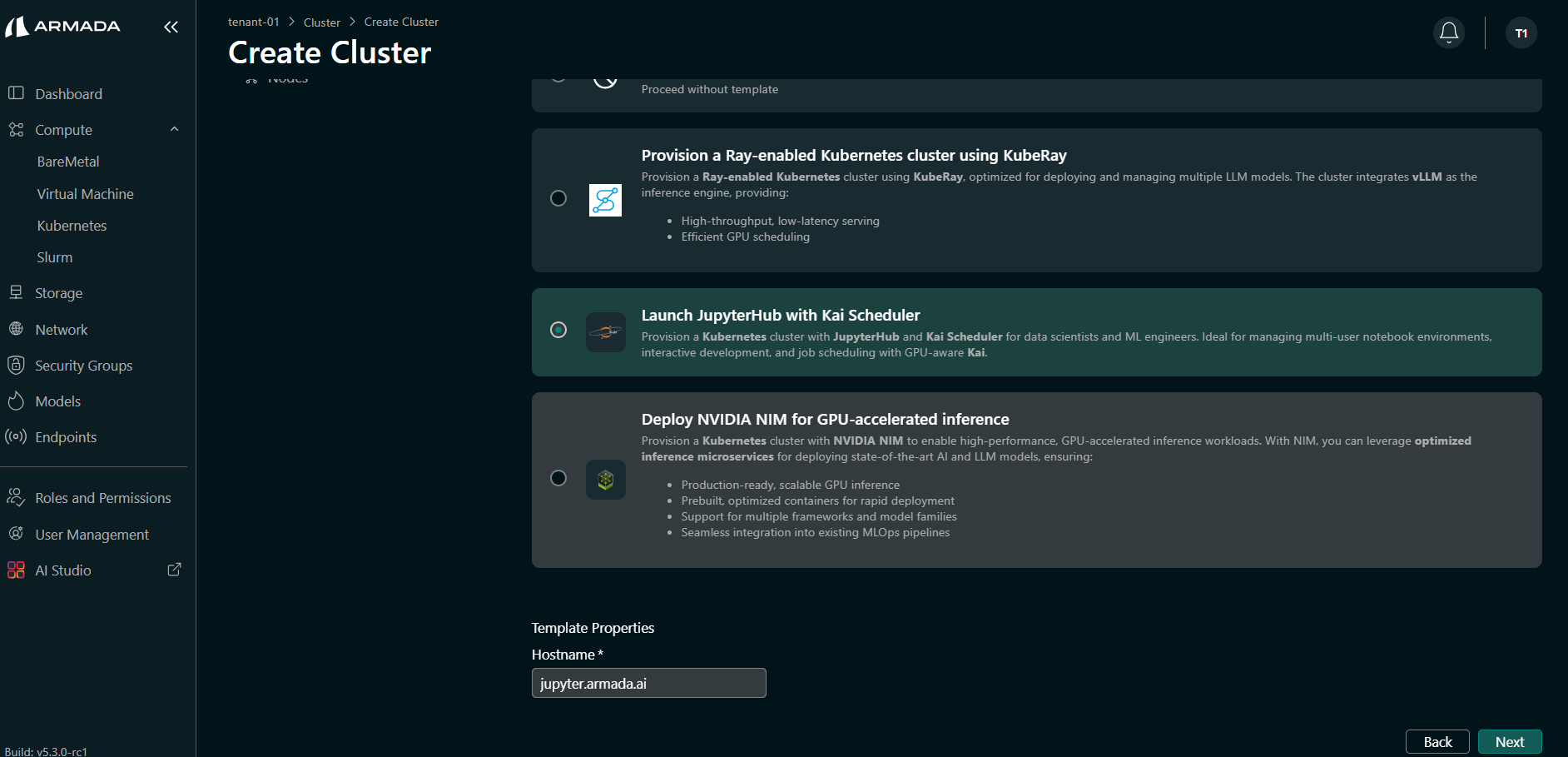

Step 3: Select Cluster Template

- Choose Launch JupyterHub with Kai Scheduler Template.

- Enter the Hostname (for example, jupyter.armada.ai) as required by your environment.

- Click Next.

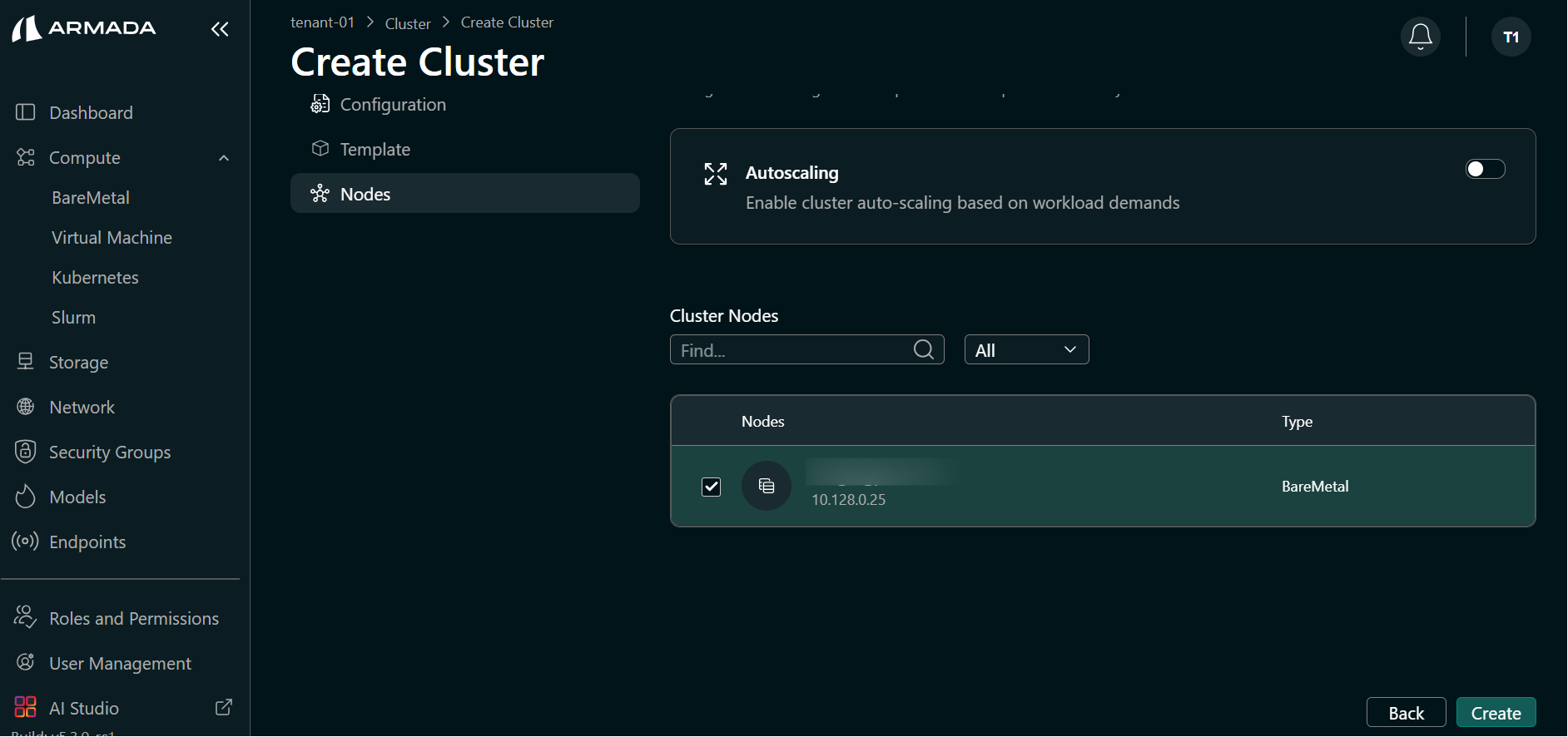

Step 4: Select Nodes and Create

- Select the cluster node(s) (Bare Metal or Virtual Machine).

- Click Create to start cluster creation.

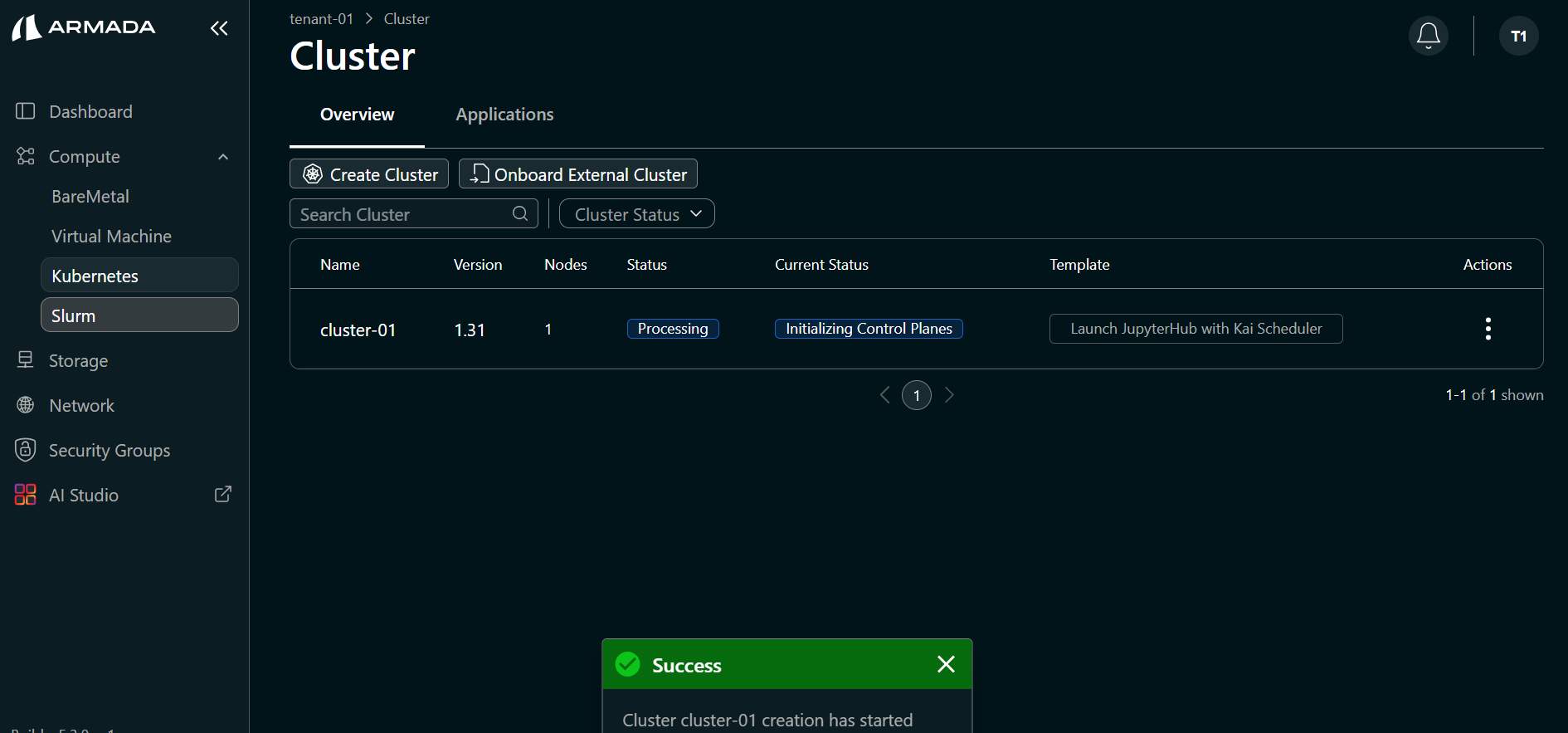

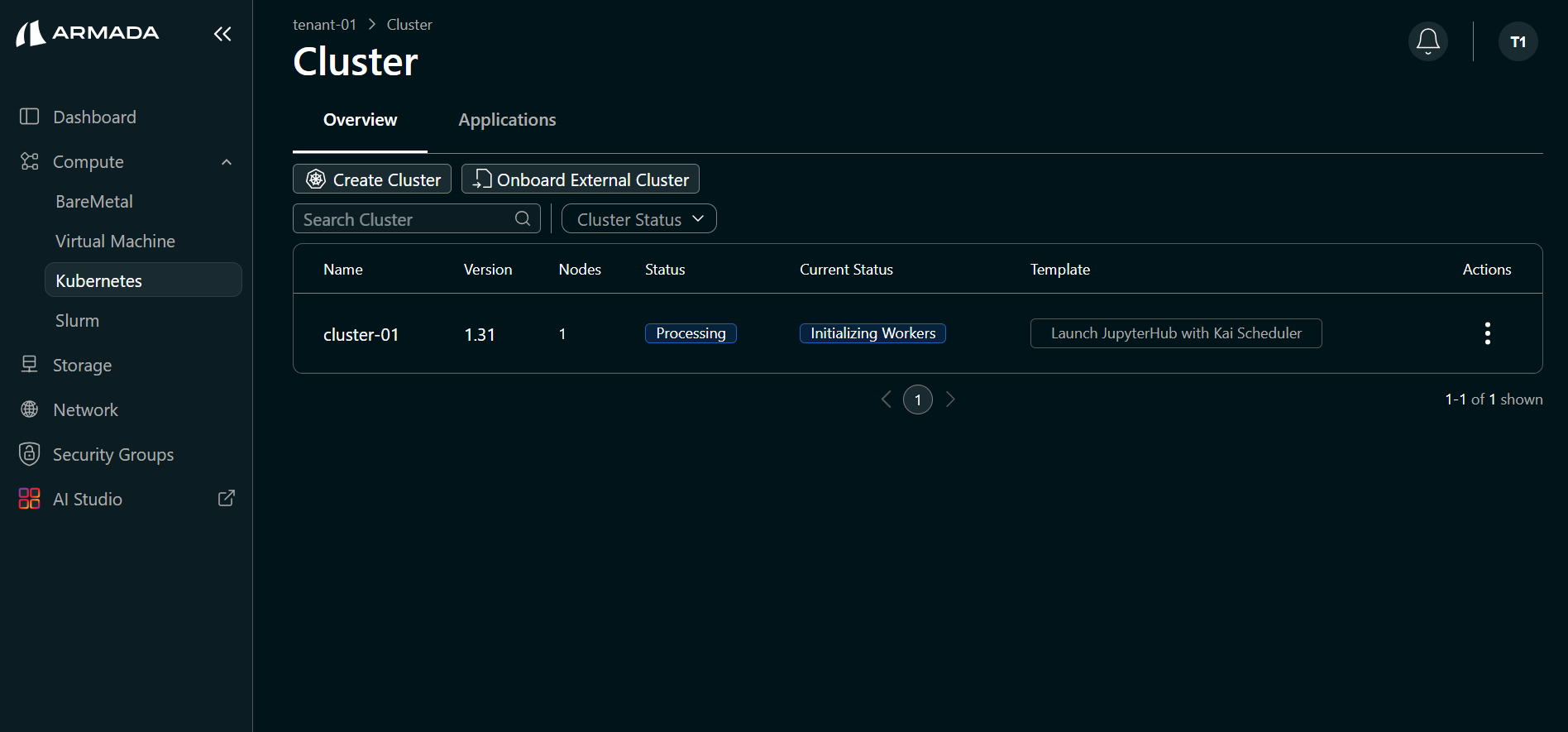

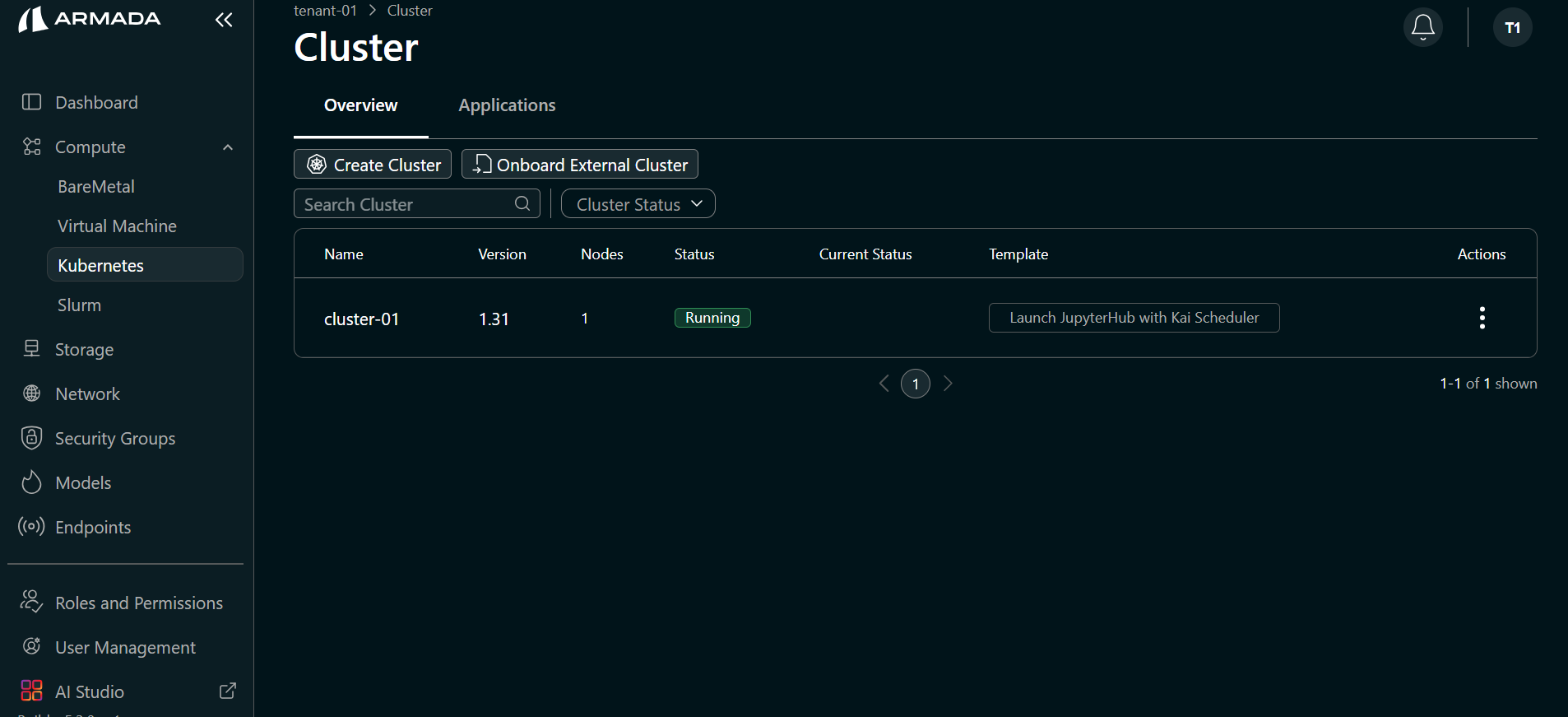

Step 5: Monitor Cluster Creation

Cluster creation runs through several states. Wait until the status is Running.

- Initializing Control Planes — Status shows Processing.

- Initializing Workers — Status remains Processing.

- When creation completes, the Status shows Running.

Step 6: Configure Local Hostname (if DNS is not resolvable)

If the configured hostname is not resolvable through public or internal DNS, add an entry to /etc/hosts on your local machine.

<GPU_VM_Public_IP> <jupyter_hostname>

Replace:

- <GPU_VM_Public_IP> with the public IP of the VM/Bare Metal node used for cluster creation

- <jupyter_hostname> with the hostname configured in Step 3 (e.g., jupyter.armada.ai)
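One way to add the entry without duplicating it on repeated runs is sketched below. The function name add_hosts_entry and the address 203.0.113.10 are placeholders introduced for illustration, not values from Bridge. Writing to /etc/hosts usually requires root privileges, so the example call is left as a comment.

```shell
# Append "<ip> <hostname>" to a hosts file unless the hostname is already mapped.
# Arguments: IP address, hostname, optional hosts file (defaults to /etc/hosts).
add_hosts_entry() {
  entry_ip="$1"
  entry_host="$2"
  hosts_file="${3:-/etc/hosts}"

  # Look for the hostname as an exact field on any non-comment line.
  if awk -v h="$entry_host" \
      '$1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == h) found = 1 }
       END { exit !found }' "$hosts_file" 2>/dev/null; then
    echo "entry for $entry_host already present in $hosts_file"
  else
    printf '%s %s\n' "$entry_ip" "$entry_host" >> "$hosts_file"
    echo "added $entry_ip $entry_host to $hosts_file"
  fi
}

# Example (placeholder IP; run from a root shell, e.g. after "sudo -s"):
#   add_hosts_entry 203.0.113.10 jupyter.armada.ai
```

Passing a different file as the third argument lets you try the function on a scratch copy before touching the real hosts file.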

Step 7: Download Kubeconfig

- Click the menu (ellipsis) icon for the cluster.

- Select Kubeconfig to download the cluster kubeconfig file.
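After the download, kubectl on your local machine needs to be pointed at the file. The following is a minimal sketch of the two approaches, assuming a Linux or macOS shell. The download path and the helper name kubeconfig_search_path are hypothetical; adjust the path to wherever your browser saved the file.

```shell
# Hypothetical download location; change this to the actual file path.
DOWNLOADED_KUBECONFIG="${HOME}/Downloads/kubeconfig.yaml"

# Build a colon-separated KUBECONFIG value: the default config (if it exists)
# followed by the downloaded file. kubectl merges all files in the list.
kubeconfig_search_path() {
  downloaded_file="$1"
  default_file="${2:-${HOME}/.kube/config}"
  if [ -f "$default_file" ]; then
    printf '%s:%s\n' "$default_file" "$downloaded_file"
  else
    printf '%s\n' "$downloaded_file"
  fi
}

# Option 1: use only the downloaded file in the current shell session.
export KUBECONFIG="$DOWNLOADED_KUBECONFIG"

# Option 2: make both the default and the downloaded config visible.
export KUBECONFIG="$(kubeconfig_search_path "$DOWNLOADED_KUBECONFIG")"

# To persist the merge, write the flattened result to a new file and
# review it before replacing your default config:
#   kubectl config view --flatten > "${HOME}/.kube/config.merged"
```

Both exports affect only the current shell session, so nothing in your existing default kubeconfig is modified unless you replace it yourself.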

Step 8: View Cluster Details and Access Tools

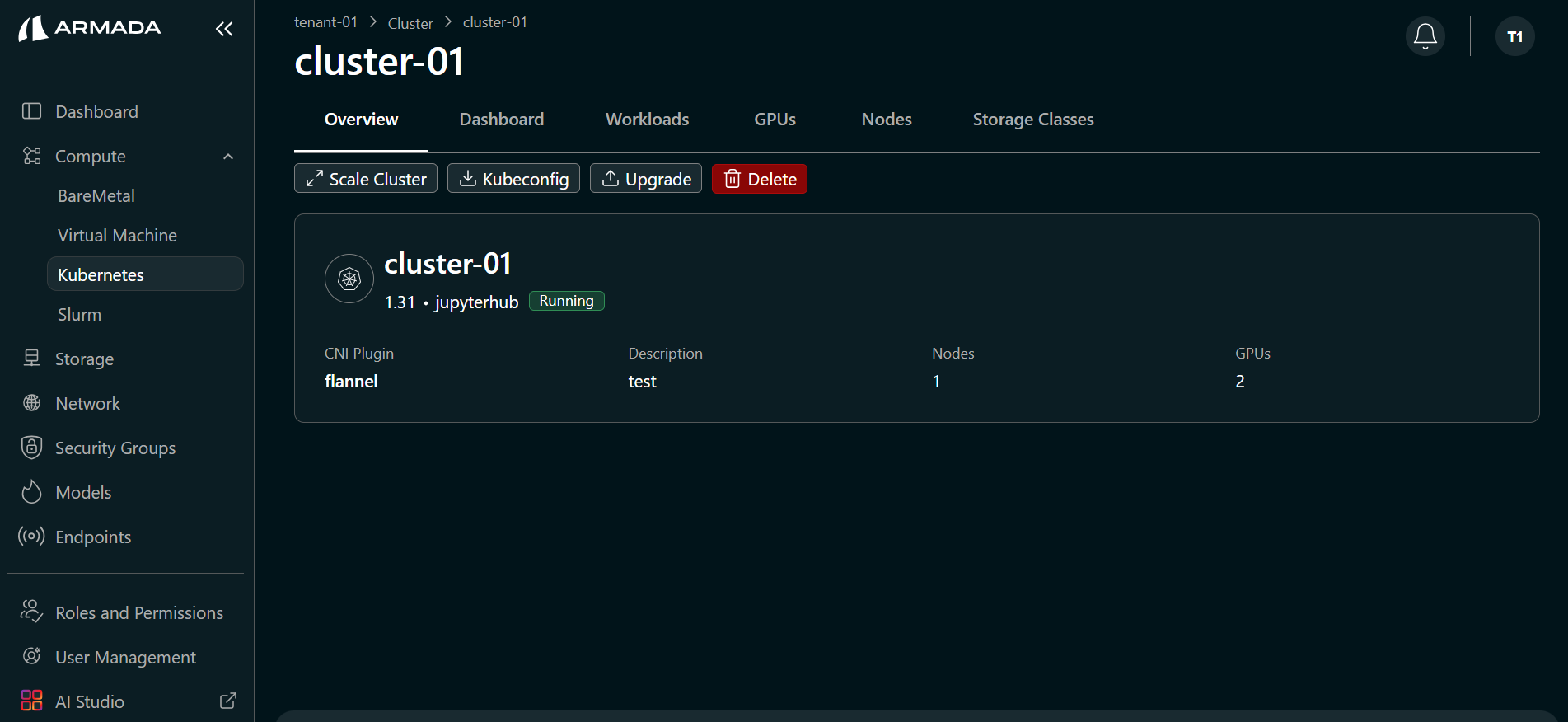

Click the cluster view icon (or cluster name) to open cluster details.

- Overview — Cluster information, and options to scale the cluster, download kubeconfig, access the dashboard, and delete the cluster. The Nodes tab shows details of the nodes allocated to the cluster.

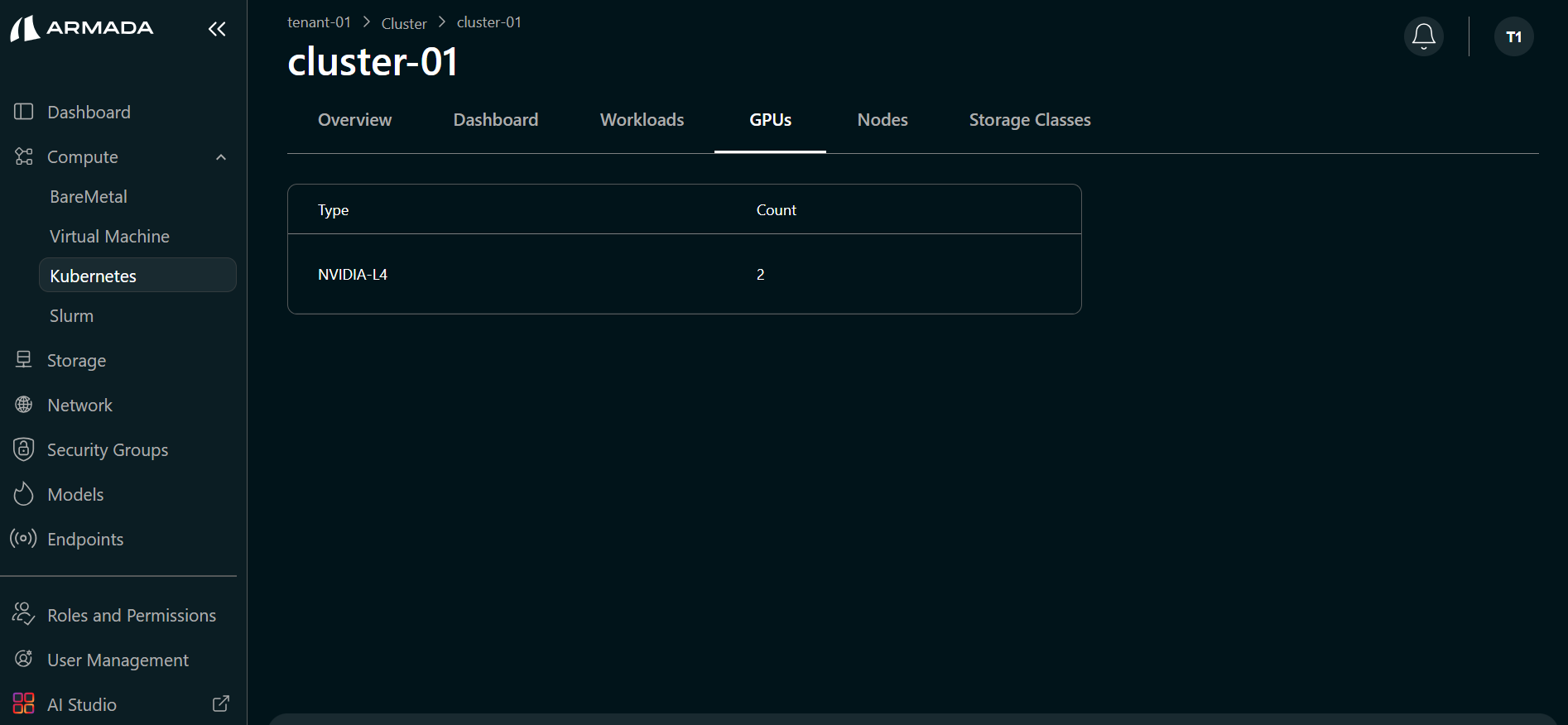

- GPUs — Click the GPUs tab to view allocated GPU details.

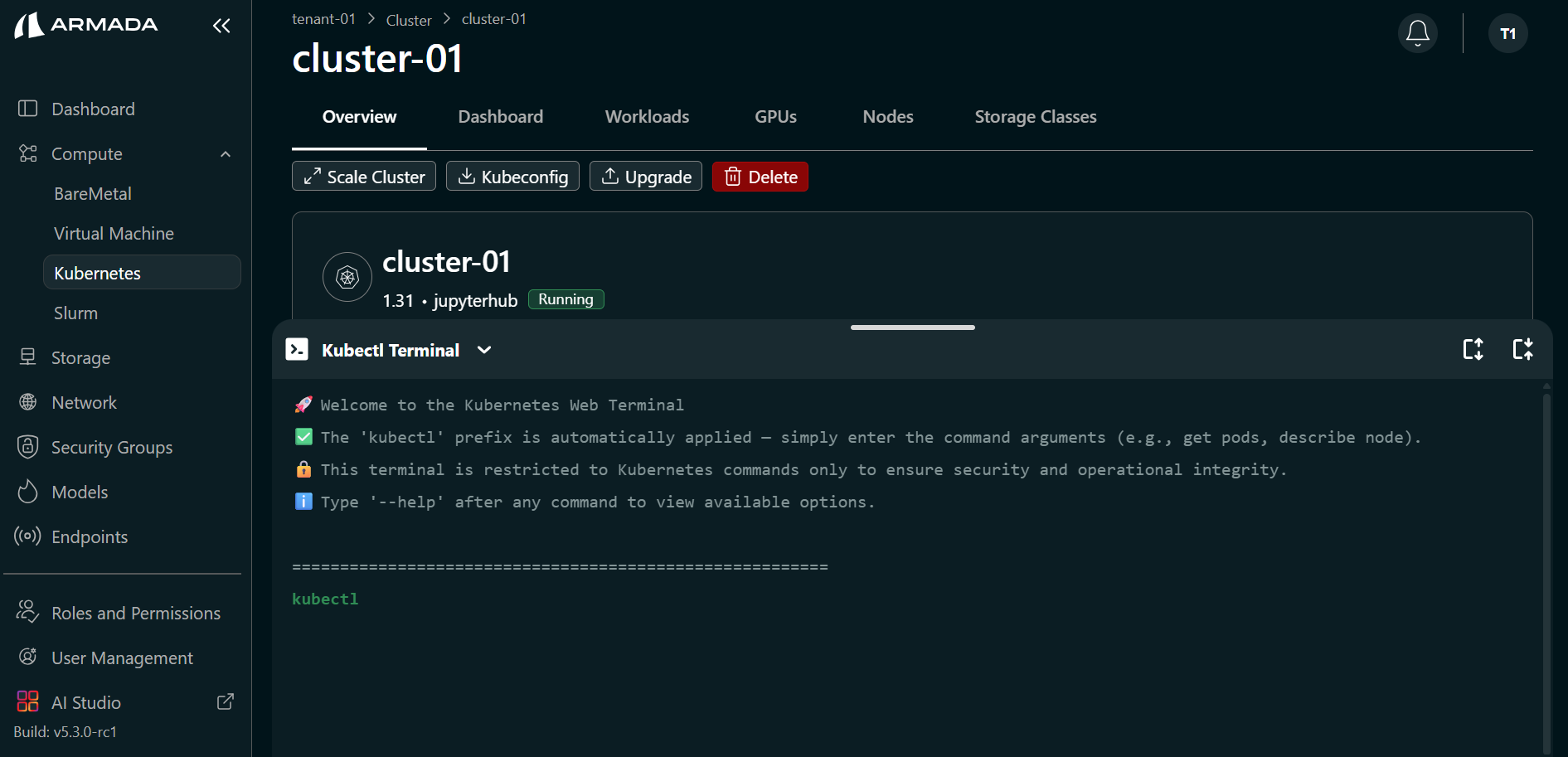

- Kubectl Terminal — Click the Kubectl Terminal (arrow) icon to access the cluster and run kubectl commands.

Ensure all pods are in Running state before using the cluster for workloads. In the Kubectl Terminal, run:

kubectl get pods -A
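On a cluster with many pods, scanning the full listing by eye is error-prone. The sketch below filters the listing down to pods that still need attention. The helper name pods_not_ready is hypothetical, and treating Completed pods (finished jobs) as acceptable is an assumption; it relies on the standard column order of kubectl get pods -A --no-headers (NAMESPACE, NAME, READY, STATUS, ...).

```shell
# Read "kubectl get pods -A --no-headers" output on stdin and print
# "namespace/name ready status" for every pod that is not fully ready.
pods_not_ready() {
  awk '{
    if ($4 == "Completed") next          # finished jobs are treated as fine (assumption)
    split($3, ready, "/")                # READY column looks like "1/2"
    if ($4 != "Running" || ready[1] != ready[2])
      print $1 "/" $2, $3, $4
  }'
}

# Usage:
#   kubectl get pods -A --no-headers | pods_not_ready
```

If the command prints nothing, every pod is either Running with all containers ready or Completed.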